
ORIGINAL RESEARCH article

Front. Digit. Health, 13 February 2025

Sec. Digital Mental Health

Volume 7 - 2025 | https://doi.org/10.3389/fdgth.2025.1509415

This article is part of the Research Topic United in Diversity: Highlighting Themes from the European Society for Research on Internet Interventions 7th Conference

Background: Despite the effectiveness and potential of digital mental health interventions (DMHIs) in routine care, their uptake remains low. In Germany, digital mental health applications (DiGA), certified as low-risk medical devices, can be prescribed by healthcare professionals (HCPs) to support the treatment of mental health conditions. The objective of this proof-of-concept study was to evaluate the feasibility of using the Multiphase Optimization Strategy (MOST) framework when assessing implementation strategies.

Methods: We tested the feasibility of the MOST by employing a 2⁴ exploratory retrospective factorial design on existing data. We assessed the impact of the implementation strategies (calls, online meetings, arranged and walk-in on-site meetings), individually and in combination, on the number of DiGA activations in a non-randomized design. Data from N = 24,817 HCPs were analyzed using non-parametric tests.

Results: The results primarily demonstrated the feasibility of applying the MOST to a non-randomized setting. Furthermore, analyses indicated significant differences between the groups of HCPs receiving specific implementation strategies [χ²(15) = 1,665.2, p < .001, ε² = 0.07]. Combinations of implementation strategies were associated with significantly more DiGA activations. For example, HCPs receiving combinations of arranged and walk-in on-site meetings showed higher activation numbers than those receiving other strategies (e.g., Z = 10.60, p < 0.001, χ² = 1,665.24). We found a moderate positive correlation between the number of strategies used and activation numbers (r = 0.30, p < 0.001).

Discussion and limitations: These findings support the feasibility of using the MOST to evaluate implementation strategies in digital mental health care. They also provide an exploratory example of how to conduct factorial designs using information on implementation strategies. However, limitations such as non-random assignment, underpowered analysis, and varying approaches to HCPs affect the robustness and generalizability of the results. Despite these limitations, the results demonstrate that the MOST is a viable method for assessing implementation strategies, highlighting the importance of planning and optimizing strategies before their implementation. By addressing these limitations, healthcare providers and policymakers can enhance the adoption of digital health innovations, ultimately improving access to mental health care for a broader population.

Despite the proven effectiveness of various treatment options for mental health conditions [e.g., (1–3)], a considerable number of individuals with mental health conditions remain without appropriate care, resulting in a significant treatment gap (4, 5). This highlights the significant ongoing burden that mental health conditions pose on global health (6). Digital mental health interventions (DMHIs) can bridge the treatment gap by increasing access to support and resources (7), overcoming barriers like cost, time, and attitudinal preferences for self-management (8). However, the uptake of digital mental health interventions by healthcare professionals (HCPs) and patients outside of research studies is often low (9).

To increase patient access to digital health interventions, the German parliament passed the Digital Care Act (DVG) in 2019. This law established specific mobile and web applications [Digitale Gesundheitsanwendungen (DiGA)] as an integral part of the German healthcare system (10). DiGA are CE-marked (Conformité Européenne) medical devices of low-risk classes that can be prescribed to support insured persons in the treatment of illnesses. The CE certificate indicates that products sold within the European Economic Area (EEA) have been evaluated to ensure compliance with high safety, health, and environmental protection standards (11). DiGA must pass safety, functionality, data protection, and security tests at the Federal Institute for Drugs and Medical Devices [Bundesinstitut für Arzneimittel und Medizinprodukte (BfArM)] and show a medical benefit or patient-relevant structural and procedural improvements (12). DiGA cover a wide range of treatments, including mental health treatments, and can be prescribed by HCPs (13). For example, the DiGA HelloBetter Stress and Burnout is a digital program for managing stress-related symptoms (14).

Despite the effectiveness of DMHIs demonstrated in numerous randomized controlled trials [RCTs; e.g., (15–18)] and the integration of DiGA into the German healthcare system, DiGA are not yet widely adopted. A report from Techniker Krankenkasse shows that by June 2023, 12% of physicians (22,200 out of 185,000) had prescribed DiGA. General practitioners accounted for 38% of prescriptions, while psychotherapists and psychiatrists made up 15%. Additionally, 74% of prescribers had issued no more than two DiGA prescriptions (19).

Several barriers contribute to this limited uptake. From a patient perspective, concerns regarding data security and privacy present significant obstacles, particularly for vulnerable groups such as older adults or those with limited digital literacy (7). From the perspective of HCPs, they often lack both knowledge of and experience with the use of DiGA for the treatment of mental disorders. A survey conducted in 2023 revealed that 65% of HCPs were unaware of the specific benefits of DiGA, and 45% reported difficulty navigating the technical processes required to prescribe these tools (20). Providing information on the benefits of DiGA and training in new technologies can enhance awareness and knowledge (20). This is particularly important because, in their role as gatekeepers of the healthcare system, HCPs are often the first point of contact for patients seeking information about digital treatment options (21). System-level barriers also play a critical role: DiGA are rarely embedded in routine care pathways, and their benefits are often communicated unclearly at the outset (22).

Addressing these barriers is essential to improve the uptake of DiGA. Implementation science offers a structured approach to addressing factors that hinder the uptake of an innovation such as DiGA. Here, “implementation strategies” are defined as a range of approaches or techniques that can be employed to enhance the adoption, implementation, sustainability, and scale-up of innovations (23). In healthcare, implementation strategies frequently entail educational meetings, the use of local opinion leaders, patient-mediated interventions, and combinations of multifaceted strategies (24).

Given that healthcare systems have limited resources for active implementation, implementation science may help address this issue effectively. Implementation science uses frameworks to structure, guide, analyze, and evaluate implementation efforts (25), and it provides a wide range of theories, frameworks, and methods for evaluating implementation processes. For example, the Consolidated Framework for Implementation Research (CFIR) aims to predict or explain barriers and facilitators in order to foster implementation effectiveness and success (26). By applying this framework, researchers can analyze contexts in order to tailor implementation strategies to them, or evaluate factors that foster implementation processes. Another well-known implementation framework is RE-AIM, which evaluates health interventions across five dimensions: Reach (individuals affected), Effectiveness (impact), Adoption (setting-level uptake), Implementation (fidelity and cost), and Maintenance (sustainability). Because it focuses on both individual- and setting-level outcomes, RE-AIM is a valuable tool for evaluating intervention success comprehensively (27). While it allows for a comprehensive evaluation of the implementation process, the RE-AIM framework mainly focuses on the effect of the intervention and its context.

Developed as a guide for intervention developers, the Multiphase Optimization Strategy (MOST) framework (28) represents an innovative approach to analyzing intervention effects. MOST integrates perspectives from engineering, statistics, biostatistics, and behavioral science to optimize interventions and evaluate them in RCTs. The approach comprises three phases: preparation, optimization, and evaluation. It is used to optimize and evaluate intervention components and their combinations in order to achieve the best possible outcomes, emphasizing efficiency, effectiveness, and resource allocation through the systematic testing and refinement of intervention components across multiple phases (28).

Evaluations of the effectiveness of implementation strategies commonly target single strategies, or they examine multifaceted implementation efforts in which interactions between strategies confound estimates of their actual effects. If implementation strategies are defined as interventions carried out to increase the uptake of certain practices, such as DiGA, researchers might be able to use the MOST framework to investigate the effects of implementation strategies quantitatively. This is of interest because well-known implementation frameworks do not allow for a quantitative, direct comparison of the effects of specific implementation strategies and their combinations. In contrast to implementation frameworks such as CFIR or RE-AIM, MOST puts a distinctive emphasis on the systematic optimization of intervention components (here: implementation strategies) using quantitative methods, making it well-suited for evaluating the interactions and combined effects of multiple strategies in complex implementation contexts such as digital health. MOST also enables direct measurement of the effects of specific implementation strategies and their combinations, potentially maximizing the effectiveness of implementation strategies. Using these features, researchers might be able to systematically optimize implementation strategies and processes.

To investigate the feasibility of utilizing the MOST framework to analyze implementation strategies in real-world settings, this proof-of-concept study was conceptualized. A proof-of-concept study aims to determine the workability of an application. Unlike full-scale studies, a proof-of-concept study is not designed to provide comprehensive results or solutions. Instead, its purpose is to demonstrate that the core idea has potential and is worth further investigation. Based on the findings of a proof-of-concept study, decisions can be made on whether to invest additional resources in further developing the concept (29).

For this study, we decided to focus on the first two phases of the MOST framework to align with the study's proof-of-concept nature. For this purpose, the preparation phase will focus on identifying and selecting potential implementation strategies. The optimization phase will systematically test combinations of identified implementation strategies to determine if it is feasible to use MOST in investigating the effect of implementation strategies in increasing DiGA activation numbers.

MOST's evaluation phase, which typically involves conducting an RCT to confirm the efficacy of the optimized intervention, will not be included in this study, as the study's primary objective is to explore the feasibility of using the MOST framework to assess how effective implementation strategies can be in increasing DiGA activations. Conducting an RCT at this stage would be beyond the scope of this exploratory research and would require additional resources, planning, and data collection.

Given the high potential but, as yet, low uptake of DiGA, it is important to explore how implementation strategies could be used to enhance DiGA uptake. Therefore, the objective of this proof-of-concept study is to demonstrate the feasibility of employing the MOST framework for assessing the effects of implementation strategies for increasing DiGA activations.

An improved understanding of whether the MOST framework can be used to investigate the impact of implementation strategies on the number of DiGA activations will assist in the optimization of these strategies. This in turn could enable DiGA providers to optimize their efforts to engage with HCPs and improve their digital health interventions, ultimately leading to an improved uptake of DiGA.

This proof-of-concept study aimed to assess the feasibility of using the MOST framework (28) to evaluate the effects of implementation strategies for increasing DiGA activations. To demonstrate this feasibility, we applied the first two phases of the framework, preparation and optimization, to investigate whether it can assess the effects of implementation strategies aimed at increasing DiGA activations. In the preparation phase, researchers identify and select intervention components (here: different implementation strategies) based on theoretical and empirical considerations. In the optimization phase, researchers test various configurations of these components to determine the most effective combination.

This study is a proof-of-concept designed to demonstrate the application of the first two phases of the MOST framework to evaluate if the model can be used to assess the effects of implementation strategies for increasing DiGA activations through an exploratory retrospective factorial design.

In this study, we considered the implementation of DiGA as the “intervention” and the implementation strategies as “intervention components” in the MOST framework. DiGA activation numbers served as the intervention outcome of interest. Hereafter, the term “activation numbers” refers to the uptake or number of DiGA users, as the actual use of a DiGA is defined by the activation of the prescription.

In the preparation phase of the MOST framework, we developed a logic model as a structured framework for activities, outputs, and outcomes to identify and select implementation strategies. In the optimization phase, we employed an exploratory retrospective factorial design to systematically test different combinations of implementation strategies (28).

Logic models are graphic tools that support design, planning, and evaluation by visually organizing information and clarifying complex relationships. They describe planned actions and their expected results (30). The logic model of this study (Figure 1) depicts the activities undertaken to improve DiGA activation numbers. These activities comprised the implementation strategies calls, online meetings, and arranged and walk-in on-site meetings, all of which were carried out by HelloBetter employees. All calls and meetings were tailored to the specific interests of the HCP. Initially, the calls and meetings focused on introducing the HelloBetter DiGA and explaining the DiGA prescription process in general. Subsequently, the emphasis shifted to patient feedback, updates from HelloBetter, and the option of therapy progress reports. Overall, the objective was to provide HCPs with comprehensive information and to inform them about the benefits of HelloBetter DiGA.

Figure 1. Logic model developed during the preparation phase of the MOST framework. Activities represent the implementation strategies employed (e.g., calls, online meetings, arranged on-site meetings, walk-in on-site meetings). Outputs refer to the number of strategies applied. Outcomes are categorized as short-term (increased awareness and understanding of DiGA among HCPs) and intermediate (behavioral change of HCPs). The long-term outcome is the number of DiGA activations.

The output described in the logic model was the number of distinct implementation strategies carried out. In the short term, we expected these strategies to increase HCPs' awareness and understanding of DiGA. In the intermediate term, we anticipated that the strategies would change attitudes, subjective norms, and perceived behavioral control among HCPs, ultimately leading to more DiGA prescriptions. In the long term, we expected an increased number of DiGA activations.

In the optimization phase of our study, we employed a 2⁴ exploratory retrospective factorial design with four factors (calls, online meetings, arranged on-site meetings, and walk-in on-site meetings). Each strategy was either present (implemented) or absent (not implemented) for an individual HCP (data point), resulting in 16 unique experimental conditions (Table 1). These conditions allowed for the systematic examination of both the individual and combined associations of the strategies with the primary outcome. For example, one HCP received only phone calls and arranged on-site meetings, while another HCP received a combination of all four strategies.

Each factor in a factorial design has its own set of controls and subjects (here: HCPs) can simultaneously serve as controls for some factors and treatments for others (28). The outcome of this research was the number of prescribed DiGA, operationalized by the DiGA activations registered on the therapy platform.
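The 2⁴ layout can be made concrete with a short Python sketch that enumerates the 16 on/off conditions, maps the set of strategies an HCP received onto one of them, and shows that each factor splits the 16 conditions into eight “on” and eight “off” cells. The factor names and helper functions are hypothetical illustrations, not HelloBetter's data schema; the factor order is assumed to follow the group labels used for Dunn's test (calls, online meetings, arranged on-site meetings, walk-in on-site meetings).

```python
from itertools import product

# Hypothetical factor names; order follows the Dunn's test group labels:
# calls, online meetings, arranged on-site meetings, walk-in on-site meetings.
FACTORS = ("call", "online", "arranged", "walk_in")


def all_conditions():
    """Return the 16 on/off combinations of a 2^4 design as tuples of 0/1."""
    return list(product((0, 1), repeat=len(FACTORS)))


def label(condition):
    """Format a condition like the '0.0.0.1' labels used in the comparisons."""
    return ".".join(str(bit) for bit in condition)


def condition_of(received):
    """Map the set of strategies one HCP received to its 0/1 condition."""
    unknown = set(received) - set(FACTORS)
    if unknown:
        raise ValueError(f"unknown strategies: {sorted(unknown)}")
    return tuple(int(f in received) for f in FACTORS)


def split_by_factor(factor):
    """Split the 16 conditions into the cells where `factor` is on vs. off.

    Every condition falls into exactly one half for every factor, which is
    why the same HCP can be a 'treated' unit for one factor and a control
    for another.
    """
    i = FACTORS.index(factor)
    conds = all_conditions()
    on = [c for c in conds if c[i] == 1]
    off = [c for c in conds if c[i] == 0]
    return on, off


if __name__ == "__main__":
    print(len(all_conditions()))                      # 16
    print(label(condition_of({"call", "arranged"})))  # 1.0.1.0
    on, off = split_by_factor("walk_in")
    print(len(on), len(off))                          # 8 8
```

The example HCP from the text who received only calls and arranged on-site meetings lands in condition 1.0.1.0; because every factor is balanced across the 16 cells in the design, main effects can in principle be estimated by contrasting the “on” and “off” halves, although unequal cell sizes in retrospective data weaken that balance.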

The retrospective design involved analyzing pre-existing data from HelloBetter's implementation efforts, in which these strategies were applied in various combinations across HCPs. HCPs were not randomized to a specific condition; instead, implementation strategies were applied to individual HCPs in a non-systematic manner as part of routine work rather than by random allocation. The retrospective approach allowed the study to use real-world data to explore the feasibility of applying the MOST framework without requiring new data collection.

The data analyzed in this study was provided by HelloBetter, one of Germany's leading providers of DiGA. HelloBetter's digital therapy programs are available either online or via a mobile application and cover a wide variety of digital mental health interventions for the treatment and prevention of common conditions, such as stress and burnout, panic disorder or chronic pain.

HelloBetter collects data routinely while collaborating with HCPs working in various healthcare institutions, including clinics and private practices across Germany. Furthermore, the company documents its implementation strategies when engaging with HCPs. These routinely collected data were used for this study.

HCPs work in organizations such as private practices or clinics across Germany and could potentially prescribe a DiGA. For this study, we focused on information on the group of HCPs who are medical doctors or psychotherapists and have recently been in contact with HelloBetter or prescribed a HelloBetter DiGA in the past. The study involved HCPs from a variety of practice settings across Germany. While the specific characteristics of these settings (e.g., urban or rural, practice size) were not the primary focus of this study, the application of the MOST framework allows for future investigations to explore how contextual factors influence the effectiveness of implementation strategies.

A post-hoc power analysis was conducted to determine the sample size required for a 2⁴ factorial design, which includes 16 experimental groups. The analysis considered an expected small effect size (Cohen's f = 0.10), as suggested by the literature on implementation strategies (31). An alpha level of 0.05 and a power of 0.80 were used as parameters for the analysis. The power analysis indicated a required total sample size of N = 1,904, with a sample size of n = 119 per group, to ensure sufficient power.
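One way to see how the reported sample size relates to the stated parameters is to write out a fixed-effects one-way ANOVA power calculation of the kind implemented in tools such as G*Power. This is an assumption: the text does not name the software or the test family, and the sketch treats the 16 cells of the factorial design as 16 groups. With Cohen's f, the noncentrality parameter is λ = f²N:

```latex
\begin{aligned}
k &= 2^4 = 16, \qquad f = 0.10, \qquad \alpha = 0.05, \qquad 1 - \beta = 0.80,\\
\lambda &= f^2 N, \qquad df_1 = k - 1 = 15, \qquad df_2 = N - k,\\
1 - \beta &= P\!\left(F'(df_1, df_2, \lambda) > F_{1-\alpha}(df_1, df_2)\right),\\
N &= 16 \times 119 = 1{,}904 \;\Rightarrow\; \lambda = 0.10^2 \times 1{,}904 = 19.04, \qquad df_2 = 1{,}888.
\end{aligned}
```

Here F′ denotes the noncentral F distribution and F₁₋α the central F critical value; power software searches numerically for the smallest N at which the third line reaches 0.80, and the last line only plugs the reported N back into these definitions.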

We analyzed existing data from January 14, 2022 to June 19, 2024, routinely collected by HelloBetter from several healthcare institutions across Germany. Participants regularly share information on their prescriber with HelloBetter to help with the communication in the prescription process. This information was used in this study to conduct a combined analysis on implementation strategies. Implementation strategies and activation numbers are routinely documented by HelloBetter and used for analysis in this study.

Following the framework recommended by Proctor et al. (32), the implementation strategies utilized in this study were designed to enhance the adoption and integration of DiGA by HCPs. These strategies included phone calls, online meetings, and both arranged and walk-in on-site meetings. All strategies were implemented by trained HelloBetter employees and tailored to meet the preferences and specific needs of HCPs. The tailoring of strategies aligns with Proctor's emphasis on adapting implementation approaches to the target audience to maximize effectiveness.

Phone calls provided information about DiGA and HelloBetter programs, offered opportunities for HCPs to ask questions, and included the option to schedule follow-up online or on-site meetings. Online meetings covered the same content but also included product demonstrations for enhanced engagement and understanding, aligning with Proctor's focus on training and technical assistance. On-site meetings, whether arranged or spontaneous, allowed for face-to-face interaction in HCP practice settings and provided the added advantage of leaving physical informational materials directly at the practice. All interactions began with employees inquiring about the HCP's individual needs, ensuring that the content was relevant and practical, as Proctor's framework emphasizes personalization and responsiveness to stakeholder priorities.

A notable distinction among these strategies was the level of planning required. Online and arranged on-site meetings were always pre-scheduled, allowing for detailed preparation, while walk-in meetings were spontaneous, enabling dynamic engagement. Phone calls offered flexibility, being either pre-scheduled or spontaneous based on the situation. This multifaceted approach reflects Proctor's recommendation to balance structured and adaptable strategies, ensuring effective and context-specific implementation efforts.

HelloBetter employees incorporated detailed information about DiGA to inform and engage HCPs during the implementation process. Specifically, this included an overview of DiGA programs offered by HelloBetter, their intended use cases (e.g., treatment of mental health conditions such as stress and anxiety), and their clinical benefits as demonstrated in RCTs [e.g., (33–35)]. Additional details included eligibility criteria for patients, the process for prescribing DiGA, and practical considerations such as data privacy and reimbursement. This information was integrated into the various implementation strategies to ensure that HCPs could fully understand and adopt these tools.

The data used in this study included HCP gender, profession, and discipline, as well as the activation numbers of HelloBetter DiGA. Furthermore, HelloBetter documents the implementation strategies it uses to engage HCPs directly, such as calls, online meetings, and arranged and walk-in on-site meetings.

The primary aim of this study was to evaluate the feasibility of using the MOST framework to assess implementation strategies for increasing the number of DiGA activations. The secondary aim was to use the MOST framework to examine the association between different implementation strategies (calls, online meetings, arranged on-site meetings, and walk-in on-site meetings) and the number of DiGA activations. To this end, the number of DiGA activations was linked to the implementation strategies each HCP had received. Additionally, we sought to evaluate the number of DiGA activations in relation to combinations of multiple implementation strategies and in relation to the total number of implementation strategies employed.

We applied a retrospective factorial design, representing the second (optimization) phase of the MOST framework (28). The assumption of homoscedasticity was not met in our data, as indicated by Levene's test (p < .001), and the assumption of normality was not met, as indicated by the Anderson–Darling normality test (p < .001) on both the raw and the Box-Cox-transformed data. Therefore, the initially planned factorial ANOVA could not be carried out.
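For reference, the Box-Cox family used before the second normality check is shown below. Because activation counts include zeros (at least one group has a median of 0), a positive shift c is needed before transforming; the value of c, and whether λ was estimated by maximum likelihood, are assumptions, since the text does not report how the transformation was parameterized.

```latex
y^{(\lambda)} =
\begin{cases}
\dfrac{(y + c)^{\lambda} - 1}{\lambda}, & \lambda \neq 0,\\[4pt]
\ln(y + c), & \lambda = 0,
\end{cases}
\qquad c > 0 \text{ chosen so that } y + c > 0 \text{ for every activation count } y.
```

A single monotone power transformation cannot remove the heavy point mass at zero that count data of this kind typically show, which is consistent with normality still being rejected after transformation.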

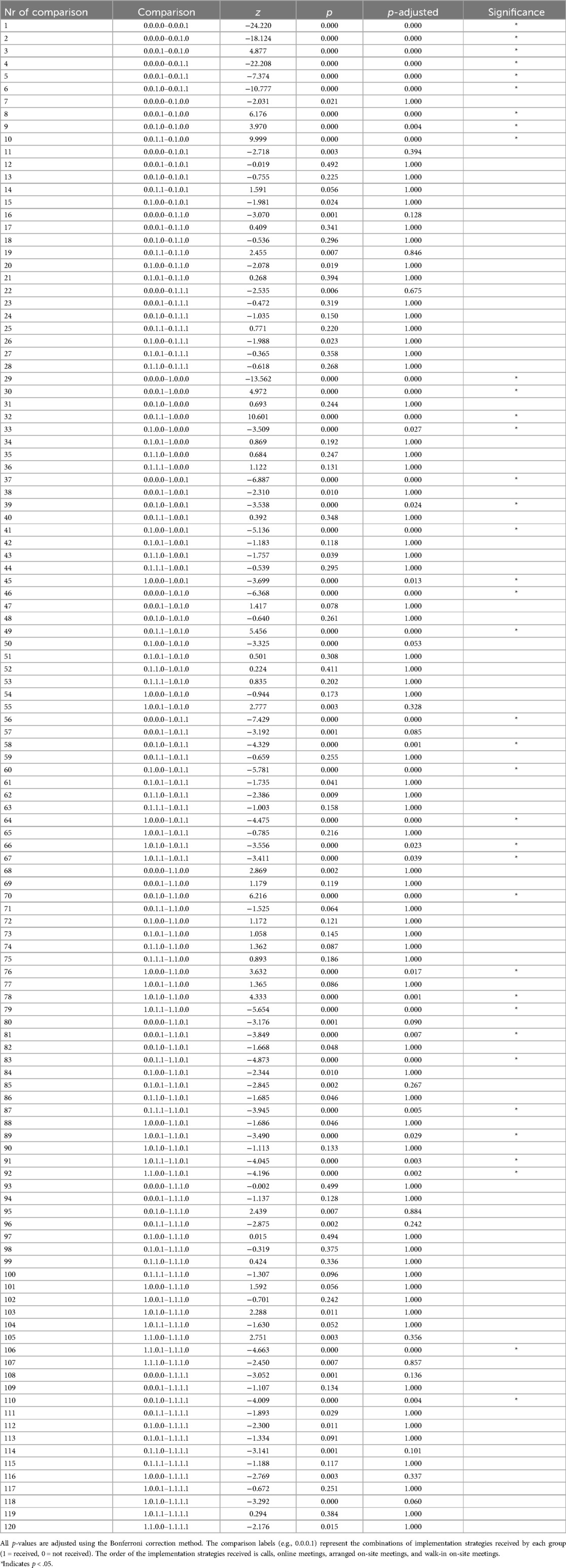

In light of these assumption violations, we applied the non-parametric Kruskal–Wallis rank test, which is more robust to unequal group sizes. A post-hoc analysis using Dunn's test with Bonferroni correction, as recommended in (36), was performed to identify which specific groups differed from one another. In the group labels used for Dunn's test (e.g., 0.0.0.1), the four positions denote, in order, calls, online meetings, arranged on-site meetings, and walk-in on-site meetings; a “0” indicates that the respective strategy was not received and a “1” that it was. The z-score in Dunn's test indicates the magnitude and direction of the difference between two groups: a positive z-score indicates that the first group in the comparison has higher activation numbers than the second group, whereas a negative z-score indicates that the first group has lower activation numbers than the second group. Mean ranks were calculated to gain insight into which groups exhibited higher or lower values relative to the other groups.
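The Kruskal–Wallis statistic and the mean ranks can be computed as in the minimal Python sketch below, which assumes the activation counts are available as one list per condition label. The function names are hypothetical; in practice, `scipy.stats.kruskal` returns the same tie-corrected H together with a p-value from a χ² distribution with k − 1 degrees of freedom.

```python
from collections import Counter


def average_ranks(values):
    """Rank values 1..N, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis(groups):
    """Return (H, mean_ranks) for a dict mapping group label -> list of values.

    H is tie-corrected, which matters for activation counts where many
    HCPs share the same value (e.g. zero activations).
    """
    labels = list(groups)
    pooled = [v for g in labels for v in groups[g]]
    n = len(pooled)
    ranks = average_ranks(pooled)

    # Mean rank per group, reading the pooled ranks back in group order.
    mean_ranks, start = {}, 0
    for g in labels:
        size = len(groups[g])
        mean_ranks[g] = sum(ranks[start:start + size]) / size
        start += size

    # H = 12 / (N(N+1)) * sum(n_i * meanrank_i^2) - 3(N+1)
    h = 12 / (n * (n + 1)) * sum(
        len(groups[g]) * mean_ranks[g] ** 2 for g in labels
    ) - 3 * (n + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (N^3 - N).
    ties = sum(t**3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n**3 - n)
    if correction == 0:
        raise ValueError("all values are tied; H is undefined")
    return h / correction, mean_ranks


if __name__ == "__main__":
    h, mean_ranks = kruskal_wallis({"a": [1, 2, 3], "b": [4, 5, 6]})
    print(round(h, 4), mean_ranks)  # 3.8571 {'a': 2.0, 'b': 5.0}
```

Ranking all HCPs jointly and averaging ranks within each group is what makes the mean ranks in Table 1 comparable across groups of very different sizes.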

In accordance with open science practices, this study was pre-registered on 12 June 2024 on the Open Science Framework (OSF) and can be accessed via https://osf.io/ugm2x.

The study does not analyze any personal or sensitive data. The data available for this study was fully anonymized.

Within this study, the application of the first two phases of MOST framework proved feasible within the constraints of existing data, demonstrating its adaptability to non-randomized settings and varying group sizes.

The results indicate the feasibility of applying the MOST framework to evaluate the effects of implementation strategies for increasing DiGA activations in real-world settings. By employing a retrospective factorial design, we systematically analyzed individual and combined implementation strategies, revealing the effects of single implementation activities and their combinations. Detailed results allow the effects of implementation strategies to be compared. Using a factorial design, we were able to assess associations between strategies and the number of DiGA activations. By conceptualizing DiGA implementation as an “intervention” and the various implementation strategies as “intervention components,” the study effectively tested the applicability of the MOST framework in this context.

The logic model (Figure 1) developed during the preparation phase provided a structured framework for systematically identifying four strategies: calls, online meetings, and arranged and walk-in on-site meetings. Although measuring the short-term and intermediate outcomes was beyond the scope of this study, the logic model served as a tool to align the implementation strategies with the study's long-term outcome of increasing DiGA activation numbers.

Data from a total of N = 24,817 HCPs (medical doctors and psychotherapists) were included in the analysis. As mentioned above, the power analysis indicated a required total sample size of N = 1,904, with n = 119 per group, to ensure sufficient power. However, because of the limitations of working with existing data, HCPs were not equally distributed across groups, and not all groups reached the required sample size. Table 1 presents the sample sizes of the individual groups in the factorial design. As the required group sizes were not met, the design was underpowered.

The interquartile range (IQR) values for the number of activations exhibited considerable variation across different groups (Table 1). For example, Group 15 has the largest IQR of 9.00, indicating substantial variability in the middle 50% of its data, while Group 10 has the smallest IQR of 1.00, indicating less variability.

Median values also varied, with groups like Group 5 (arranged on-site meeting) showing a median of 0.0, indicating no activations for at least half of the participants. Conversely, groups like Group 13, Group 14, and Group 15 had medians of 2.0, indicating that at least half of the participants had two or more activations (Table 1).
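Per-group medians and IQRs of this kind can be obtained with Python's standard library, as in the sketch below. The helper name is hypothetical, and the quartile convention is an assumption: the text does not say which software produced Table 1, and different quartile methods can yield slightly different IQRs for the same data.

```python
import statistics


def median_iqr(values):
    """Median and interquartile range (Q3 - Q1) of one group's activation counts.

    Uses the 'inclusive' quartile method (linear interpolation between order
    statistics); other software may use another convention.
    """
    q1, q2, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q2, q3 - q1


if __name__ == "__main__":
    median, iqr = median_iqr([0, 0, 1, 2, 5])
    print(median, iqr)  # median 1, IQR 2
```

Because the median and IQR ignore the extreme tail, they describe skewed count data such as activations more faithfully than a mean and standard deviation would.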

Mean ranks were calculated to gain insight into which groups exhibited higher or lower values relative to the other groups (Table 1). For instance, Group 3 (online meetings) had a low mean rank of 12,915.57, indicating lower activations compared to other groups. Group 5 (arranged on-site meetings) and Group 7 (online meetings and arranged on-site meetings) had moderate mean ranks, reflecting moderate activations. Group 12 (calls, online meetings, and walk-in on-site meetings) had the highest mean rank of 22,238.60, indicating the highest activations among the groups. The mean ranks of all groups can be found in Table 1.

The sample displayed a gender distribution with 57.97% female HCPs (n = 14,269). The sample consisted primarily of medical doctors with 84.43% (n = 20,954), while psychotherapists made up 15.57% of the sample. The disciplines were diverse, with the most prevalent being general medicine (23.83%), psychiatry and psychotherapy (17.06%), neurology, psychiatry, and psychotherapy (12.13%), and internal medicine (9.68%). Further descriptive information can be found in Table 2.

The Kruskal–Wallis test indicated significant differences between the groups (e.g., No Intervention, Online only, Call only, Walk-in only, Call + Online, Call + Walk-in, Online + Walk-in, etc.), χ²(15) = 1,665.2, p < .001. An epsilon-squared (ε²) value of 0.07 suggests a medium effect size.
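Assuming the common definition of epsilon-squared for the Kruskal–Wallis test (the text does not state which formula was used), the reported effect size follows directly from H and the total sample size, and the 15 degrees of freedom follow from the 16 groups:

```latex
\begin{aligned}
\varepsilon^2 &= \frac{H}{(N^2 - 1)/(N + 1)} = \frac{H}{N - 1}
= \frac{1665.2}{24{,}817 - 1} = \frac{1665.2}{24{,}816} \approx 0.067 \approx 0.07,\\
df &= k - 1 = 16 - 1 = 15.
\end{aligned}
```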

Given that the Kruskal–Wallis test was statistically significant, post hoc pairwise comparisons using Dunn's test with Bonferroni correction revealed several statistically significant differences between the activation numbers of various groups. Table 3 illustrates the results of pairwise comparisons between experimental groups, highlighting Z-scores and adjusted p-values for each comparison. A comparison of groups that received any strategies with those that received no strategies revealed that the former exhibited consistently higher numbers of activations. For instance, comparisons involving the “No Intervention” group (0.0.0.0) consistently show large Z-scores and highly significant p-values when contrasted with groups receiving individual strategies or combined approaches. Comparison 1 in Table 3 demonstrates that the first group in the comparison (No Intervention) has a lower mean rank (fewer activations) than the second group (Walk-In Only) (Z-value = −24.22, p < .001, χ² = 1,665.24).
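Dunn's test compares the mean ranks of two groups against a standard error derived from the pooled ranking of all observations. The sketch below follows the usual formulation with a tie adjustment and multiplies each two-sided p-value by the number of comparisons (Bonferroni); with 16 groups there are 16 × 15 / 2 = 120 comparisons. The function and its inputs are hypothetical illustrations; tested implementations exist, for example `posthoc_dunn` in the scikit-posthocs package, which reports adjusted p-values.

```python
import math
from itertools import combinations


def dunn_bonferroni(mean_ranks, sizes, n_total, tie_sum=0.0):
    """Pairwise Dunn z-statistics with Bonferroni-adjusted two-sided p-values.

    mean_ranks, sizes: dicts keyed by group label (e.g. '0.0.0.1').
    n_total: total number of observations across all groups.
    tie_sum: sum of (t**3 - t) over runs of tied values in the pooled data.
    z is positive when the first group of a pair has the higher mean rank.
    """
    pairs = list(combinations(sorted(mean_ranks), 2))
    m = len(pairs)  # number of comparisons, e.g. 120 for 16 groups
    base = n_total * (n_total + 1) / 12 - tie_sum / (12 * (n_total - 1))
    results = {}
    for a, b in pairs:
        se = math.sqrt(base * (1 / sizes[a] + 1 / sizes[b]))
        z = (mean_ranks[a] - mean_ranks[b]) / se
        p = math.erfc(abs(z) / math.sqrt(2))  # two-sided standard normal p
        results[(a, b)] = (z, min(1.0, p * m))  # Bonferroni, capped at 1
    return results


if __name__ == "__main__":
    res = dunn_bonferroni({"0.0.0.0": 2.0, "0.0.0.1": 5.0},
                          {"0.0.0.0": 3, "0.0.0.1": 3}, n_total=6)
    for pair, (z, p_adj) in res.items():
        print(pair, round(z, 3), round(p_adj, 4))
```

Sorting the labels puts the “No Intervention” group (0.0.0.0) first in its pairs, so a negative z there means it has the lower mean rank, matching the sign convention described for Table 3.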

Table 3. Pairwise comparisons (Bonferroni-adjusted P-values) with Z-values of implementation strategies.

Furthermore, groups that received the combination of arranged and walk-in on-site meetings showed higher activation numbers than groups that received any other strategy or combination of strategies (Table 3, e.g., comparison 5: 0.0.0.1–0.0.1.1, Z-value = −7.37, p < .001, χ² = 1,665.24).

The group receiving walk-in on-site meetings showed higher activation numbers than the group receiving arranged on-site meetings (Table 3, comparison 3: 0.0.0.1–0.0.1.0, Z-value = 4.88, p < .001, χ2 = 1,665.24) and the group receiving online meetings (Table 3, comparison 8: 0.0.0.1–0.1.0.0, Z-value = 6.18, p < .001, χ2 = 1,665.24).
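The four-part group labels appear to encode a 2^4 factorial with one on/off indicator per strategy. The factor order used below (call, online meeting, arranged on-site meeting, walk-in on-site meeting) is an assumption inferred from the comparisons above, where 0.0.0.1 is walk-in only, 0.0.1.0 is arranged only, and 0.1.0.0 is online only. Summing the indicators is one plausible way to derive a "number of strategies" variable. The helper names are hypothetical.

```python
# Assumed factor order, inferred from the reported comparisons
# (0.0.0.1 = walk-in only, 0.0.1.0 = arranged only, 0.1.0.0 = online only).
FACTORS = ("call", "online meeting", "arranged on-site meeting", "walk-in on-site meeting")


def decode_group(label: str) -> dict:
    """Map a label such as '0.0.1.1' to which strategies the group received."""
    bits = label.split(".")
    if len(bits) != len(FACTORS) or any(b not in ("0", "1") for b in bits):
        raise ValueError(f"unexpected group label: {label!r}")
    return {factor: bit == "1" for factor, bit in zip(FACTORS, bits)}


def strategy_count(label: str) -> int:
    """Number of strategies received; 0 for the no-intervention group 0.0.0.0."""
    return sum(decode_group(label).values())


for label in ("0.0.0.0", "0.0.0.1", "0.0.1.1", "1.1.1.1"):
    received = [f for f, on in decode_group(label).items() if on] or ["none"]
    print(label, strategy_count(label), ", ".join(received))
```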

We then analyzed the relationship between the total number of strategies employed and the number of activations. The correlation analysis showed a small to moderate positive linear relationship between the two (r = 0.30, p < .001). A simple linear regression was conducted to examine the predictive relationship between the total number of strategies and the number of activations. The regression model was statistically significant, F (1, 24,815) = 2,415, p < .001: each additional strategy employed was associated with approximately 0.997 additional activations. However, the model explains only approximately 8.87% of the variance in the number of activations.
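In a simple linear regression with a single predictor, R² equals r² and F = R² / (1 − R²) × (n − 2). The sketch below assumes that the reported 8.87% is the model R² and that all N = 24,817 HCPs entered the model (the reported denominator of 24,815 degrees of freedom is consistent with this). It checks that the reported r, R², and F agree with one another; the function name is illustrative.

```python
import math


def simple_regression_f(r_squared: float, n: int) -> float:
    """F statistic for a regression with one predictor: F = R^2 / (1 - R^2) * (n - 2)."""
    return r_squared / (1 - r_squared) * (n - 2)


r_squared = 0.0887  # variance explained, as reported
n = 24_817          # HCPs analysed, as reported
r = math.sqrt(r_squared)
f_stat = simple_regression_f(r_squared, n)
print(f"r = {r:.2f}, F(1, {n - 2}) = {f_stat:.0f}")
```

This gives r ≈ 0.30 and F ≈ 2,415, matching the values in the text.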

This proof-of-concept study demonstrates the feasibility of applying the MOST framework to evaluate the effects of implementation strategies for increasing DiGA activations in real-world settings. A retrospective factorial design enabled the systematic analysis of individual and combined implementation strategies. The identification of significant differences between groups, and of promising combinations such as arranged plus walk-in on-site meetings, illustrates the practical utility of the framework for optimizing implementation efforts. The results underscore the potential of the MOST framework as a structured and efficient approach to complex implementation challenges in digital health interventions.

As a methodological framework designed to optimize the development and evaluation of behavioral and psychosocial interventions, the MOST framework (28) is particularly useful for identifying the most effective combination of intervention components (here: implementation strategies).

In this study, we tested whether the structure of the first two phases of the MOST framework (preparation and optimization) can be applied when evaluating implementation strategies. For this purpose, we treated the implementation of digital health as the “intervention” and specific implementation strategies as the “intervention components” in MOST terms. We developed a logic model for DiGA implementation and conducted a retrospective factorial trial on the implementation strategies, with the number of DiGA activations as the outcome.

The main goal of this study was to show the feasibility of a MOST trial using implementation strategies as components to inform future research projects, for example a full MOST trial including original data. We found that implementation strategies could be used as components in a MOST trial and that the conduct of a retrospective factorial experiment can lead to a deeper understanding of specific dependencies of implementation strategies or their combination for fostering the uptake of digital health applications.

As a secondary aim, we used existing data to illustrate what the outcomes of a MOST trial investigating implementation strategies might look like and to explore the effects of strategies such as calls, online meetings, and arranged and walk-in on-site meetings on DiGA activation numbers. Groups of HCPs that received any implementation strategy showed higher numbers of DiGA activations than the group that received none. Notably, groups of HCPs receiving a combination of arranged and walk-in on-site meetings showed higher activation numbers than those receiving other strategies. This suggests that a multi-faceted approach with face-to-face engagement, whether scheduled or walk-in, may help increase the number of DiGA activations. These findings are consistent with previous studies, including a systematic review indicating that multi-faceted implementation strategies are more effective than uni-faceted ones (24).

The moderate positive correlation between the total number of strategies employed and the total number of activations suggests that, while employing more strategies may be associated with more activations, this relationship alone has limited explanatory power. The literature points in a different direction: a systematic review examining the effectiveness of implementation strategies among HCPs in a cancer care context found no significant association between the number of strategies employed and behavior change among HCPs (37). This discrepancy might be due to limitations of our study setup. Changing HCP behavior might require a more targeted approach.

Although this study successfully applied the MOST framework to assess implementation strategies, several constraints, particularly regarding data structure and methodology, limited the framework's utility and effectiveness. A significant limitation of this proof-of-concept study is the potential feedback loop between the dependent variable (DiGA activations) and the independent variable (implementation strategies). HCPs who already prescribe more DiGA may be approached differently from those who do not, which may influence which implementation strategies they receive. This creates a bias, as HCPs inclined to prescribe DiGA might receive different and/or more intensive strategies, potentially skewing the results. It is therefore difficult to determine whether the increase in DiGA activations is due to the strategies or to pre-existing tendencies of the HCPs.

HelloBetter documents various implementation strategies, including calls, emails, faxes, meetings, postal mail, letters, test accounts, flyers, webinars, congresses, and campaigns. For this study, we focused specifically on strategies involving direct personal contact: calls, online meetings, and arranged and walk-in on-site meetings. This limited scope might not fully represent the broader implementation efforts employed by HelloBetter.

Additionally, we did not restrict the evaluation of implementation strategies to a specific time frame, although this matters because the strategies were modified over the course of the data collection period. This makes it difficult to attribute changes in DiGA activation numbers to specific strategies or time periods, as the evolving nature of the strategies introduces variability and potential confounding. The retrospective factorial design has further limitations, including the inability to control for confounding variables or to establish causality. HCPs were not randomly assigned to the groups, which may introduce bias. Additionally, the order of implementation activities was not counterbalanced, which might lead to order effects. Finally, the non-parametric and underpowered analyses compromise the robustness and generalizability of the findings.

Such biases complicate the optimization process within the MOST framework, because it becomes difficult to isolate the effects of the implementation strategies from the influence of the HCPs' pre-existing behavior. Feedback loops, the absence of a defined time frame, and the lack of randomization all make it hard to establish clear causal relationships between the implementation strategies and the observed outcomes. This, in turn, limits the MOST framework's capacity to accurately assess the effectiveness of the strategies and, consequently, to support a comprehensive and reliable optimization process.

For this study, we had access only to DiGA activation numbers, not to prescription numbers. Some prescriptions issued by the HCPs may therefore not have been turned into an activation by the patient, so HCPs might have prescribed more DiGA than we captured in this study. Still, focusing on activation numbers rather than prescription numbers has advantages: it allows evaluation of the practical adoption of DiGA by end users. While prescriptions reflect direct HCP behavior and are an important intermediate metric, they do not necessarily show whether patients engage with and activate the prescribed DiGA, which is a prerequisite for the ultimate outcome, a health benefit. Previous research has shown that a substantial proportion of prescribed DiGA are never activated, highlighting a key gap between prescription and actual utilization [e.g., (19)]. For example, an HCP might issue a high number of DiGA prescriptions, but to an unsuitable group of patients, who would then not activate their DiGA. Or an HCP might be unable to explain the benefit of the DiGA to the patient, resulting in non-activation. Such prescriptions would not represent implementation success either. Activation numbers therefore provide a more direct measure of DiGA uptake and use, which are critical indicators of the intervention's success in real-world settings. Future analyses could benefit from examining both prescription and activation numbers, as well as why not all prescriptions turn into DiGA activations, to gain a more comprehensive understanding of HCP and DiGA user behavior. In the context of the MOST framework, which is designed to optimize and evaluate the most effective components of an intervention, the distinction between prescriptions and activations is critical. Considering both metrics would allow a more nuanced analysis of how implementation strategies influence HCP behavior (prescriptions) and end-user engagement (activations).

Despite its limitations, this study offers valuable insights for digital mental health implementation science. Our findings indicate that the MOST framework might be an appropriate tool for assessing implementation strategies. The results also highlight the need to integrate frameworks such as MOST into the planning and optimization of strategies before they are implemented.

The findings of this study offer several actionable insights for researchers and implementers working to investigate and improve the implementation of digital interventions. This study suggests a method for critically investigating implementation activities in routine care settings, yielding quantifiable knowledge of the effects of specific implementation strategies. Digital healthcare providers in particular, such as DiGA providers, could critically investigate and then adapt their implementation strategies based on tangible measures. Implementation can be costly; failed implementation even more so. The uptake of health care innovations into routine care has been reported to take significant time and effort (38). Speeding up the integration of a health care innovation such as DiGA into routine care might be crucial to its success and sustainability. We assume that implementers too often choose and apply implementation strategies with an “it seems like a good idea” approach, without strategically evaluating their success. Applying the MOST framework to assess the effect of specific implementation strategies might improve the effectiveness of implementation endeavors, such as integrating DiGA into German healthcare. To leverage these insights, healthcare systems should prioritize integrating the evaluation and optimization of implementation strategies into routine workflows and ensure sufficient training and resources for implementation staff. Policymakers can support this by offering HCPs incentives to participate in such strategies, such as certification for training.

While this proof-of-concept study indicates that it is possible to conduct a MOST trial to systematically assess the effects of specific implementation strategies using existing data, future research should run a full MOST trial with original data. Within the preparation phase, researchers should evaluate all ongoing and planned implementation activities as well as their assumed effects. Ideally, this phase would start before the actual implementation of an innovation, so that all implementation activities could be purposefully selected. If implementation activities are already ongoing, researchers could use techniques such as stakeholder involvement to reach consensus on the most promising implementation strategies for their context. Either way, this process should include a full implementation evaluation, for example using the Consolidated Framework for Implementation Research (CFIR) (26), to assess the context as well as barriers and facilitators of the implementation. Implementation strategies should then be tailored to these findings.

After identifying the implementation strategies, the strategy components to test (for example, digital vs. face-to-face delivery of a strategy), or multi-faceted sets of strategies, researchers would conduct the factorial experiment. They could also choose alternative designs to investigate strategy effectiveness, such as fractional factorial experiments, sequential, multiple assignment, randomized trials (SMARTs), micro-randomized trials, system identification, or others (28). Researchers would collect data in the specific context, randomly assigning HCPs to groups that each receive a defined set of implementation strategies (or none). Running such a factorial implementation experiment would allow investigation of the effects of implementation strategies and their combinations. Such a trial could overcome most of the limitations mentioned above: it could be adequately powered, and the factorial groups would be set in advance. In our example, HCPs would be randomized to the conditions of the factorial design and would then receive only the strategies assigned to their condition. This procedure would allow a less biased assessment of specific strategies.
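The randomization step of such a full factorial experiment can be sketched as follows. This minimal example assumes the four strategies studied here as on/off factors, giving 2^4 = 16 conditions labelled in the same 0/1 style as Table 3, and assumes simple balanced randomization of individual HCPs with a fixed seed for reproducibility. Stratified or cluster randomization (for example by practice) may be preferable in a real trial, and this sketch does not attempt either. The factor names, HCP IDs, and seed are hypothetical.

```python
import random
from itertools import product

# Hypothetical factor names mirroring the four strategies studied here.
FACTORS = ("call", "online", "arranged_onsite", "walkin_onsite")


def factorial_conditions(k: int = len(FACTORS)):
    """All 2^k on/off combinations, labelled like '0.1.0.1'."""
    return [".".join(map(str, combo)) for combo in product((0, 1), repeat=k)]


def assign_balanced(hcp_ids, conditions, seed=2025):
    """Randomly assign HCPs so that condition sizes differ by at most one.

    Shuffles the IDs with a seeded generator, then deals them out to the
    conditions in round-robin order.
    """
    ids = list(hcp_ids)
    rng = random.Random(seed)
    rng.shuffle(ids)
    return {hcp: conditions[i % len(conditions)] for i, hcp in enumerate(ids)}


conditions = factorial_conditions()
allocation = assign_balanced(range(1, 161), conditions)  # 160 hypothetical HCP IDs
sizes = {c: sum(1 for v in allocation.values() if v == c) for c in conditions}
print(len(conditions), min(sizes.values()), max(sizes.values()))  # 16 10 10
```

Each HCP would then receive only the strategies whose indicator is 1 in the assigned label, for example "0.0.1.1" meaning arranged and walk-in on-site meetings only.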

After this optimization phase, a full MOST trial proceeds to the evaluation phase. This phase involves testing effectiveness through an RCT to determine whether the optimized implementation intervention shows a statistically and clinically significant effect, measured as the difference between the optimized implementation intervention and control groups (e.g., “implementation-as-usual”). If the RCT confirms the implementation intervention's effectiveness, it can be prepared for roll-out in the intended setting.

Limitations of this procedure include the restricted number of components, confounding factors, and the high cost of such a trial. Naturally, many implementation activities are carried out at once when implementing digital interventions. Researchers investigating the effects of specific implementation strategies would need to carefully consider which activities constitute implementation strategies to be investigated and how many components to include in their factorial design. Likewise, they would have to be aware of the confounding effects of other implementation activities ongoing at the implementation site that are not part of the factorial design. Lastly, we assume that a MOST trial assessing the effectiveness of implementation strategies for digital interventions will be quite costly. Direct costs will arise from the large sample sizes needed, given the small effect sizes expected for individual implementation strategies. Indirect costs might include lower activation numbers, for the duration of the trial, in conditions of the factorial design with less effective strategies and in groups receiving no strategies. Implementers might instead use a “the more the better” method, applying multiple implementation strategies at once and observing their combined effect. This “blanket approach” might lead to short-term success, but might be more costly in the long term than investing in identifying the most successful implementation strategies. Nonetheless, the risk of applying (a group of) less effective implementation strategies to some HCPs in a factorial design might make implementers and companies hesitant to invest in such a trial.
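The link between small expected effects and large samples can be made concrete with a standard normal-approximation power formula for a two-sided comparison of two group means: n per arm = 2 (z₁₋α/₂ + z₁₋β)² / d². The sketch below assumes α = 0.05, 80% power, and a continuous outcome summarized by a standardized effect size d. These values are illustrative and were not specified for this study. The function name is hypothetical.

```python
import math
from statistics import NormalDist


def n_per_arm(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Per-arm sample size for a two-sided, two-sample comparison of means
    (normal approximation): n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2."""
    z = NormalDist().inv_cdf
    return math.ceil(2 * (z(1 - alpha / 2) + z(power)) ** 2 / d ** 2)


for d in (0.5, 0.2, 0.1):
    total = 2 * n_per_arm(d)
    print(f"d = {d}: about {total} HCPs in total")
```

Halving the effect size roughly quadruples the required sample, from about 126 HCPs at d = 0.5 to about 786 at d = 0.2 and 3,140 at d = 0.1. In a 2^4 factorial analysed with effect coding, each main effect contrasts the half of the sample that received a strategy with the half that did not, so under the usual MOST reasoning (28) a total sample sized in this way could cover all four main effects at once. Interaction effects and multiple-testing adjustments would change these figures.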

Lastly, implementers would have to decide whether to conduct the third phase of the MOST framework, evaluating the optimized intervention through a randomized controlled trial (RCT) or another rigorous study design. By doing so, they could confirm the efficacy and effectiveness of the implementation intervention (the single strategy or combination of strategies) as a whole, ensuring that the chosen configuration of strategies (components) is effective in practice. Such investigations of implementation strategies have been very rare and would also advance the field of implementation science.

For DiGA implementation, running a full MOST trial would help DiGA implementers and providers better understand the mechanisms of DiGA implementation. So far, much focus has been placed on identifying and discussing implementation barriers, and efforts to overcome those barriers have mainly taken the form of classical marketing and sales activities. Drawing on implementation science and then systematically investigating the effectiveness of specific implementation strategies might help overcome barriers to DiGA implementation and foster the uptake of these interventions in routine care.

In conclusion, this proof-of-concept study demonstrates the feasibility of applying the MOST framework to evaluate the effects of implementation strategies for increasing DiGA activations in real-world settings. By employing the preparation and optimization phases of the MOST framework, this study highlights its potential as a structured approach for systematically evaluating and optimizing implementation efforts. Applying the framework enabled a systematic comparison of multiple strategies, providing actionable insights into their relative effectiveness.

While this study focuses on the feasibility of using the MOST framework rather than delivering definitive conclusions on implementation strategy effectiveness, the findings suggest that the framework can serve as a valuable tool for digital health providers and policymakers. Future research should build on this work by conducting fully powered trials to further validate the framework's applicability and optimize implementation strategies, ultimately supporting broader adoption of digital health interventions and improving access to mental health care for more people.

The datasets presented in this article are not readily available because they belong to the company HelloBetter. Access to the data requires a formal request to be made directly to HelloBetter. Requests to access the datasets should be directed to kontakt@hellobetter.de.

AA: Conceptualization, Formal Analysis, Investigation, Methodology, Project administration, Visualization, Writing – original draft, Writing – review & editing. WB: Supervision, Writing – review & editing. IC: Writing – review & editing. AE: Conceptualization, Methodology, Project administration, Supervision, Validation, Writing – review & editing.

The author(s) declare that no financial support was received for the research, authorship, and/or publication of this article.

We would like to thank HelloBetter for providing the data that made this project possible. We also extend our appreciation to Dr. Sümeyye Balci for her valuable feedback.

AE was employed by GET.ON Institut für Online Gesundheitstrainings GmbH.

The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

The author(s) declare that no Generative AI was used in the creation of this manuscript.

All claims expressed in this article are solely those of the authors and do not necessarily represent those of their affiliated organizations, or those of the publisher, the editors and the reviewers. Any product that may be evaluated in this article, or claim that may be made by its manufacturer, is not guaranteed or endorsed by the publisher.

1. Cuijpers P, Sijbrandij M, Koole SL, Andersson G, Beekman AT, Reynolds CF. The efficacy of psychotherapy and pharmacotherapy in treating depressive and anxiety disorders: a meta-analysis of direct comparisons. World Psychiatry. (2013) 12(2):137–48. doi: 10.1002/wps.20038

2. Cuijpers P, Donker T, Weissman MM, Ravitz P, Cristea IA. Interpersonal psychotherapy for mental health problems: a comprehensive meta-analysis. Am J Psychiatry. (2016) 173(7):680–7. doi: 10.1176/appi.ajp.2015.15091141

3. Carpenter JK, Andrews LA, Witcraft SM, Powers MB, Smits JAJ, Hofmann SG. Cognitive behavioral therapy for anxiety and related disorders: a meta-analysis of randomized placebo-controlled trials. Depress Anxiety. (2018) 35(6):502–14. doi: 10.1002/da.22728

4. Jorm AF, Patten SB, Brugha TS, Mojtabai R. Has increased provision of treatment reduced the prevalence of common mental disorders? Review of the evidence from four countries. World Psychiatry. (2017) 16(1):90–9. doi: 10.1002/wps.20388

5. Moitra M, Santomauro D, Collins PY, Vos T, Whiteford H, Saxena S, et al. The global gap in treatment coverage for major depressive disorder in 84 countries from 2000 to 2019: a systematic review and Bayesian meta-regression analysis. PLoS Med. (2022) 19(2):e1003901. doi: 10.1371/journal.pmed.1003901

6. Arias D, Saxena S, Verguet S. Quantifying the global burden of mental disorders and their economic value. eClinicalMedicine. (2022) 54:101675. doi: 10.1016/j.eclinm.2022.101675

7. Borghouts J, Eikey E, Mark G, De Leon C, Schueller SM, Schneider M, et al. Barriers to and facilitators of user engagement with digital mental health interventions: systematic review. J Med Internet Res. (2021) 23(3):e24387. doi: 10.2196/24387

8. Ebert DD, Mortier P, Kaehlke F, Bruffaerts R, Baumeister H, Auerbach RP, et al. Barriers of mental health treatment utilization among first-year college students: first cross-national results from the WHO world mental health international college student initiative. Int J Methods Psychiatr Res. (2019) 28(2):e1782. doi: 10.1002/mpr.1782

9. Batterham PJ, Sunderland M, Calear AL, Davey CG, Christensen H, Teesson M, et al. Developing a roadmap for the translation of e-mental health services for depression. Aust N Z J Psychiatry. (2015) 49(9):776–84. doi: 10.1177/0004867415582054

10. Federal Ministry of Health. Driving the digital transformation of Germany’s healthcare system for the good of patients, Federal Ministry of Health (2020). Available online at: https://www.bundesgesundheitsministerium.de/en/digital-healthcare-act.html (accessed: 4 April 2024).

11. European Commission. CE marking, European Commission (2021). Available online at: https://single-market-economy.ec.europa.eu/single-market/ce-marking_en?prefLang=de (accessed: 25 January 2025).

12. Bundesministerium für Gesundheit. Digitale Gesundheitsanwendungen (DIGA), Bundesministerium für Gesundheit (2024). Available online at: https://www.bundesgesundheitsministerium.de/themen/krankenversicherung/online-ratgeber-krankenversicherung/arznei-heil-und-hilfsmittel/digitale-gesundheitsanwendungen (accessed: 4 April 2024).

13. gesund.bund.de. DiGA: Apps on prescription, gesund.bund.de (2021). Available online at: https://gesund.bund.de/en/digital-health-applications-diga (accessed: 27 June 2024).

14. HelloBetter. Stress und Burnout | Online-Kurs von HelloBetter, HelloBetter (2024). Available online at: https://hellobetter.de/online-kurse/burnout-stress/ (accessed: 27 June 2024).

15. Nobis S, Lehr D, Ebert DD, Baumeister H, Snoek F, Riper H, et al. Efficacy of a web-based intervention with mobile phone support in treating depressive symptoms in adults with type 1 and type 2 diabetes: a randomized controlled trial. Diabetes Care. (2015) 38(5):776–83. doi: 10.2337/dc14-1728

16. Behrendt D, Ebert DD, Spiegelhalder K, Lehr D. Efficacy of a self-help web-based recovery training in improving sleep in workers: randomized controlled trial in the general working population. J Med Internet Res. (2020) 22(1):e13346. doi: 10.2196/13346

17. Zarski A-C, Berking M, Ebert DD. Efficacy of internet-based treatment for genito-pelvic pain/penetration disorder: results of a randomized controlled trial. J Consult Clin Psychol. (2021) 89(11):909–24. doi: 10.1037/ccp0000665

18. Küchler A, Kählke F, Vollbrecht D, Peip K, Ebert DD, Baumeister H. Effectiveness, acceptability, and mechanisms of change of the internet-based intervention StudiCare mindfulness for college students: a randomized controlled trial. Mindfulness (N Y). (2022) 13(9):2140–54. doi: 10.1007/s12671-022-01949-w

19. Baas J, Techniker Krankenkasse. DIGA-Report II. In DiGA-Report II (2024). Available online at: https://www.tk.de/resource/blob/2170850/e7eaa59ecbc0488b415409d5d3a354cf/tk-diga-report-2-2024-data.pdf

20. Kerst A, Zielasek J, Gaebel W. Smartphone applications for depression: a systematic literature review and a survey of health care professionals’ attitudes towards their use in clinical practice. Eur Arch Psychiatry Clin Neurosci. (2020) 270(2):139–52. doi: 10.1007/s00406-018-0974-3

21. Bundeszentrale Für Gesundheitliche Aufklärung (BZgA). Primäre Gesundheitsversorgung/Primary Health Care, Bundeszentrale Für Gesundheitliche Aufklärung (BZgA) (2023). Available online at: https://leitbegriffe.bzga.de/alphabetisches-verzeichnis/primaere-gesundheitsversorgung-primary-health-care/ (accessed: 24 June 2024).

22. GKV-Spitzenverband. Bericht des GKV-Spitzenverbandes über die Inanspruchnahme und Entwicklung der Versorgung mit Digitalen Gesundheitsanwendungen (DiGA-Bericht), GKV-Spitzenverband (2023). Available online at: https://www.gkv-spitzenverband.de/gkv_spitzenverband/presse/fokus/fokus_diga.jsp (accessed: 28 June 2024).

23. Curran GM, Bauer M, Mittman B, Pyne JM, Stetler C. Effectiveness-implementation hybrid designs: combining elements of clinical effectiveness and implementation research to enhance public health impact. Med Care. (2012) 50(3):217–26. doi: 10.1097/MLR.0b013e3182408812

24. Goorts K, Dizon J, Milanese S. The effectiveness of implementation strategies for promoting evidence informed interventions in allied healthcare: a systematic review. BMC Health Serv Res. (2021) 21(1):241. doi: 10.1186/s12913-021-06190-0

25. Moullin JC, Dickson KS, Stadnick NA, Albers B, Nilsen P, Broder-Fingert S, et al. Ten recommendations for using implementation frameworks in research and practice. Implement Sci Commun. (2020) 1(1):42. doi: 10.1186/s43058-020-00023-7

26. Damschroder LJ, Reardon CM, Widerquist MAO, Lowery J. The updated consolidated framework for implementation research based on user feedback. Implement Sci. (2022) 17(1):75. doi: 10.1186/s13012-022-01245-0

27. Glasgow RE, Vogt TM, Boles SM. Evaluating the public health impact of health promotion interventions: the RE-AIM framework. Am J Public Health. (1999) 89(9):1322–7. doi: 10.2105/AJPH.89.9.1322

28. Collins LM. Optimization of Behavioral, Biobehavioral, and Biomedical Interventions: The Multiphase Optimization Strategy (MOST). Cham: Springer International Publishing (Statistics for Social and Behavioral Sciences) (2018). doi: 10.1007/978-3-319-72206-1

29. Kendig CE. What is proof of concept research and how does it generate epistemic and ethical categories for future scientific practice? Sci Eng Ethics. (2016) 22(3):735–53. doi: 10.1007/s11948-015-9654-0

30. Knowlton LW, Phillips CC. The Logic Model Guidebook: Better Strategies for Great Results. Thousand Oaks, CA: SAGE (2013). p. 2.

31. Baker R, Camosso-Stefinovic J, Gillies C, Shaw EJ, Cheater F, Flottorp S, et al. Tailored interventions to address determinants of practice. Cochrane Database Syst Rev. (2015) 2015(4):CD005470. doi: 10.1002/14651858.cd005470.pub3

32. Proctor EK, Powell BJ, McMillen JC. Implementation strategies: recommendations for specifying and reporting. Implement Sci. (2013) 8(1):139. doi: 10.1186/1748-5908-8-139

33. Heber E, Ebert DD, Lehr D, Cuijpers P, Berking M, Nobis S, et al. The benefit of web- and computer-based interventions for stress: a systematic review and meta-analysis. J Med Internet Res. (2017) 19(2):e32. doi: 10.2196/jmir.5774

34. Ebenfeld L, Lehr D, Ebert DD, Kleine Stegemann S, Riper H, Funk B, et al. Evaluating a hybrid web-based training program for panic disorder and agoraphobia: randomized controlled trial. J Med Internet Res. (2021) 23(3):e20829. doi: 10.2196/20829

35. Harrer M, Nixon P, Sprenger AA, Heber E, Boß L, Heckendorf H, et al. Are web-based stress management interventions effective as an indirect treatment for depression? An individual participant data meta-analysis of six randomised trials. BMJ Mental Health. (2024) 27(1):e300846. doi: 10.1136/bmjment-2023-300846

37. Tomasone JR, Kauffeldt KD, Chaudhary R, Brouwers MC. Effectiveness of guideline dissemination and implementation strategies on health care professionals’ behaviour and patient outcomes in the cancer care context: a systematic review. Implement Sci. (2020) 15(1):41. doi: 10.1186/s13012-020-0971-6

Keywords: DiGA, Digitale Gesundheitsanwendungen, digital health applications, implementation science, healthcare professionals, internet-based interventions, mental health, multiphase optimization strategy

Citation: Aydin A, van Ballegooijen W, Cornelisz I and Etzelmueller A (2025) Evaluating the feasibility of using the Multiphase Optimization Strategy framework to assess implementation strategies for digital mental health applications activations: a proof of concept study. Front. Digit. Health 7:1509415. doi: 10.3389/fdgth.2025.1509415

Received: 10 October 2024; Accepted: 28 January 2025;

Published: 13 February 2025.

Edited by:

Rosa M. Baños, University of Valencia, Spain

Reviewed by:

Mihaela Dinsoreanu, Technical University of Cluj-Napoca, Romania

Copyright: © 2025 Aydin, van Ballegooijen, Cornelisz and Etzelmueller. This is an open-access article distributed under the terms of the Creative Commons Attribution License (CC BY). The use, distribution or reproduction in other forums is permitted, provided the original author(s) and the copyright owner(s) are credited and that the original publication in this journal is cited, in accordance with accepted academic practice. No use, distribution or reproduction is permitted which does not comply with these terms.

*Correspondence: Ayla Aydin, ayla.s.aydin@hotmail.com

