HYPOTHESIS AND THEORY article

Front. Hum. Neurosci., 15 November 2016

Sec. Brain Health and Clinical Neuroscience

Volume 10 - 2016 | https://doi.org/10.3389/fnhum.2016.00550

This article is part of the Research Topic Mapping psychopathology with fMRI and effective connectivity analysis

Klaas E. Stephan1,2,3*

Zina M. Manjaly1,4

Christoph D. Mathys2

Lilian A. E. Weber1

Saee Paliwal1

Tim Gard1,5

Marc Tittgemeyer3

Stephen M. Fleming2

Helene Haker1

Anil K. Seth6

Frederike H. Petzschner1

This paper outlines a hierarchical Bayesian framework for interoception, homeostatic/allostatic control, and meta-cognition that connects fatigue and depression to the experience of chronic dyshomeostasis. Specifically, viewing interoception as the inversion of a generative model of viscerosensory inputs allows for a formal definition of dyshomeostasis (as chronically enhanced surprise about bodily signals, or, equivalently, low evidence for the brain's model of bodily states) and allostasis (as a change in prior beliefs or predictions which define setpoints for homeostatic reflex arcs). Critically, we propose that the performance of interoceptive-allostatic circuitry is monitored by a metacognitive layer that updates beliefs about the brain's capacity to successfully regulate bodily states (allostatic self-efficacy). In this framework, fatigue and depression can be understood as sequential responses to the interoceptive experience of dyshomeostasis and the ensuing metacognitive diagnosis of low allostatic self-efficacy. While fatigue might represent an early response with adaptive value (cf. sickness behavior), the experience of chronic dyshomeostasis may trigger a generalized belief of low self-efficacy and lack of control (cf. learned helplessness), resulting in depression. This perspective implies alternative pathophysiological mechanisms that are reflected by differential abnormalities in the effective connectivity of circuits for interoception and allostasis. We discuss suitably extended models of effective connectivity that could distinguish these connectivity patterns in individual patients and may help inform differential diagnosis of fatigue and depression in the future.

Fatigue is a prominent symptom of major clinical significance, not only in chronic fatigue syndrome (CFS) per se, but across a wide range of immunological and endocrine disorders, cancer and neuropsychiatric diseases (for overviews, see Wessely, 2001; Chaudhuri and Behan, 2004; Dantzer et al., 2014). For example, it is the most frequent symptom in multiple sclerosis (MS; Stuke et al., 2009), with a major impact on quality of life. It is strongly associated with depression (Wessely et al., 1996; Bakshi et al., 2000; Kroencke et al., 2000; Pittion-Vouyovitch et al., 2006), and longitudinal studies have demonstrated that fatigue represents a risk factor for depression (and vice versa; Skapinakis et al., 2004). Additionally, fatigue represents a core criterion for the diagnosis of major depression in standard psychiatric classification schemes (ICD-10 and DSM-5).

The clinical concept of fatigue is a heterogeneous construct, comprising at least two dimensions (Kluger et al., 2013): fatiguability of cognitive and motor processes, and subjective perception of fatigue. While the former can be measured objectively, the latter requires self-report via questionnaires. Given its clinical importance, it has been remarkably difficult to develop a theory of fatigue that is comprehensive, specific and allows for developing objective clinical tests (Wessely, 2001). Research on its pathophysiology has largely focused on molecular processes, particularly in the context of inflammation (Dantzer et al., 2014; Patejdl et al., 2016), but efforts to link these molecular processes to the physiology and computation (information processing) of cerebral circuits are rare. This paper attempts to address this challenge and outlines the foundations of a theory of fatigue that is grounded in interoception (Craig, 2002) and homeostatic/allostatic control (Sterling, 2012), offering a formal (hierarchical Bayesian) perspective on (some of) the computations involved. In particular, we propose a metacognitive mechanism that explains the sequential occurrence of fatigue and depression, given a state of prolonged dyshomeostasis.

This paper has the following structure. First, we discuss why disease theories of fatigue confined to the molecular/cellular level are not sufficient for a comprehensive understanding of fatigue, but need to be complemented by a computational perspective. Second, as a basis for developing this perspective, we review long-standing notions from systems theory and control theory and their implications for interoception as well as homeostatic and allostatic control. Third, we apply a hierarchical Bayesian view to fatigue and cast it as a meta-cognitive phenomenon: a belief of failure at one's most fundamental task, homeostatic/allostatic regulation, which arises from experiencing enhanced interoceptive surprise. We suggest that fatigue is a (possibly adaptive) initial allostatic response to a state of interoceptive surprise; if dyshomeostasis continues, the belief of low allostatic self-efficacy and lack of control may pervade all domains of cognition and manifest as a generalized sense of helplessness, with depression as a consequence. Fourth, we derive specific predictions against which this theory can be tested and outline the necessary methodological extensions of contemporary models of effective connectivity, such as DCM. Finally, we consider how such extended generative models might become useful for differential diagnosis of fatigue in the future.

Existing pathophysiological theories of fatigue mainly refer to inflammatory and metabolic processes at the molecular level. For example, a longstanding observation is that pro-inflammatory cytokines, resulting from peripheral (extra-cerebral) immunological processes, induce “sickness behavior” (Dantzer and Kelley, 2007) with fatigue as a key symptom. This may result from a range of different mechanisms, including reduced synthesis of monoaminergic transmitters or inflammation-induced shifts in the production of metabolites such as kynurenines, which impact on transmission at glutamatergic synapses (for a comprehensive recent review, see Dantzer et al., 2014).

While these hypotheses have been very influential and useful in suggesting potential future treatment avenues, they do not, on their own, allow for constructing a comprehensive theory of fatigue. First, as for any neuropsychiatric symptom, we eventually need a theory that unifies and links disease processes across molecular, cellular and circuit (systems) levels of description. This is important because a theory of fatigue that is confined to the molecular level does not explain how clinical symptoms arise; by contrast, a circuit-level description is the closest we can presently get to behavior and subjective experience. Moreover, neuropsychiatric disease processes can not only originate from the molecular level and spread “bottom-up,” causing cellular and circuit-level disturbances; in addition, the reverse (top-down) direction and the ubiquitous existence of reciprocal brain-body interactions are well-established (Sapolsky, 2015). For example, seemingly maladaptive behavior can materialize as the (optimal) consequence of beliefs that form under exposure to specific environmental input statistics (Schwartenbeck et al., 2015b). That is, in the absence of any primary molecular or synaptic pathology, exposure to unusual environmental events can induce distorted beliefs about the causal structure of the world, e.g., that it is inherently unpredictable or uncontrollable (cf. learned helplessness; Abramson et al., 1978). Such beliefs engender misdirected coping behavior and have profound physiological consequences, including a dysregulation of cerebral control over endocrine and autonomic nervous system processes (e.g., aberrant activation of the hypothalamic-pituitary-adrenal (HPA) axis; Tsigos and Chrousos, 2002). Importantly, the ensuing immunological and metabolic disturbances in the body exert strong feedback effects on cerebral circuits.
For example, stress-related increases in levels of cortisol and pro-inflammatory cytokines affect NMDA receptor (NMDAR) function (Nair and Bonneau, 2006; Gruol, 2015; Vezzani and Viviani, 2015). Importantly, NMDAR dependent signaling is thought to be essential for updating and encoding representations of beliefs (Corlett et al., 2010; Vinckier et al., 2016). This suggests that peripheral inflammatory or endocrine disturbances could impede the adjustment of aberrant beliefs by which they were caused in the first place (i.e., a positive feedback loop). In brief, the existence of closed-loop interactions between cognitive and bodily processes implies that we require a wider theory of fatigue than one focusing on molecular events alone.

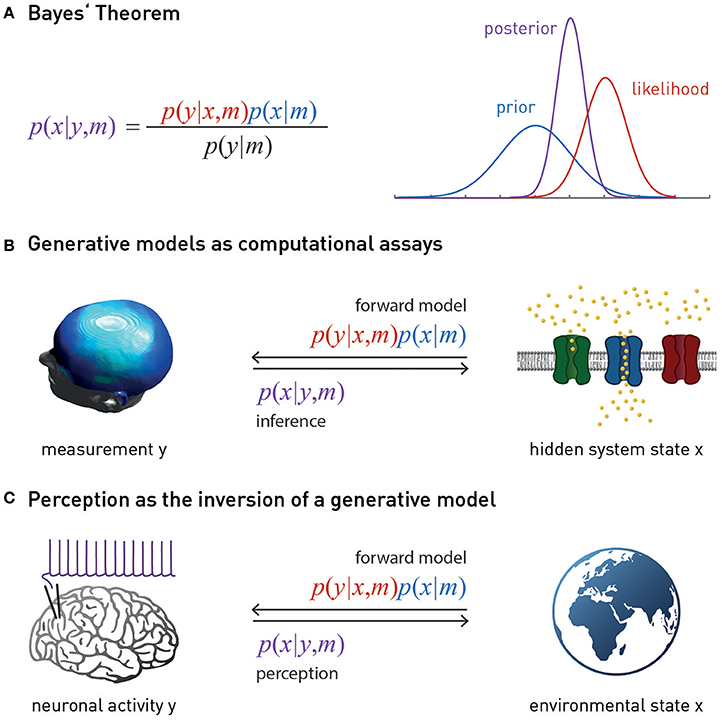

There is a second, more practical, reason why a purely molecular/cellular theory of fatigue cannot provide a clinically sufficient account of fatigue: molecular disease processes in brain tissue are not easily accessible for non-invasive diagnostics in humans. The relative separation of the brain from the body by the blood-brain barrier means that we only have indirect access to brain tissue, for example via biochemical analyses of cerebrospinal fluid (CSF), and there are very few diagnostic questions (e.g., in neuro-oncology or epilepsy) where the risks of brain tissue biopsies or invasive recordings are justified by diagnostic benefits. However, provided we have a concrete model that specifies how a disease process at the molecular/cellular level leads to measurable changes in the activity of specific brain circuits, one can, in principle, infer the expression of this process from non-invasive neuroimaging and electrophysiological measurements, such as functional magnetic resonance imaging (fMRI) or magneto-/electroencephalography (M/EEG). Technically, this involves a so-called “generative model” m which specifies how the hidden (unobservable) state x of a neuronal circuit translates probabilistically into a measurement y obtained with fMRI or M/EEG, and which can be used to infer hidden states from measurements (Figure 1). Using a generative model of brain activity or behavioral measurements to address diagnostic questions amounts to a “computational assay” (Stephan and Mathys, 2014; Stephan et al., 2015). The application of generative models to clinical questions is presently beginning to take place across the whole range of neuropsychiatry, including applications to schizophrenia (Schlagenhauf et al., 2014), depression (Hyett et al., 2015), bipolar disorder (Breakspear et al., 2015), Parkinson's disease (Herz et al., 2014), channelopathies (Gilbert et al., 2016), or epilepsy (Cooray et al., 2015).
One particular approach we return to below is the generative modeling of neurophysiological circuits. For example, dynamic causal models (DCMs; for reviews see Daunizeau et al., 2011; Friston et al., 2013) allow one to infer directed synaptic connections (effective connectivity) from neuroimaging or electrophysiological data.

Figure 1. (A) Bayes' theorem provides the foundation for a generative model m. This combines the likelihood function p(y | x, m) (a probabilistic mapping from hidden states of the world, x, to sensory inputs y) with the prior p(x | m) (an a priori probability distribution of the world's states). Model inversion corresponds to computing the posterior p(x | y, m), i.e., the probability of the hidden states, given the observed data y. The posterior is a “compromise” between likelihood and prior, weighted by their relative precisions. The model evidence p(y | m) in the denominator of Bayes' theorem is a normalization constant that forms the basis for Bayesian model comparison (see main text). (B) Suitably specified and validated generative models with mechanistic (e.g., physiological or algorithmic) interpretability could be used as a computational assay for diagnostic purposes. The left graphic is reproduced, with permission, from Garrido et al. (2008). (C) Contemporary models of perception (the “Bayesian brain hypothesis”) assume that the brain instantiates a generative model of its sensory inputs. Perception corresponds to inverting this model, yielding posterior beliefs about the causes of sensory inputs. The globe picture is freely available from http://www.vectortemplates.com/raster-globes.php.

Generative modeling is an attractive approach for establishing differential diagnostic procedures. However, given the myriad of possible disease processes, guidance by clinical theories is crucial for the development of computational assays. The framework outlined in this paper is meant to inform the development of generative models that infer mechanisms of fatigue and depression from fMRI and MEG/EEG data. The predictions of this framework suggest that differential diagnosis could be decisively facilitated by model-based estimates of directed synaptic connectivity (effective connectivity) within interoceptive circuits and their interactions with regions potentially involved in meta-cognition.

The diverse behavioral, cognitive and emotional facets of fatigue, its occurrence in numerous syndromatically defined diseases, and the multitude of findings from immunology, neurophysiology and psychology offer a large number of degrees of freedom for “bottom-up” explanations of this complex symptom (Dantzer et al., 2014; Patejdl et al., 2016). Given this complexity, investigating fatigue requires guidance by formal theories which provide top-down constraints on organizing and interpreting the diversity of experimental findings. These top-down constraints could be derived, for example, from theories about the purpose, structure and biophysical implementation of the brain's computations1. This strategy is at the heart of an emerging discipline, “Computational Psychiatry” (Montague et al., 2012; Stephan and Mathys, 2014; Friston et al., 2014b; Huys et al., 2016), and has shown promise in tackling other complex neuropsychiatric symptoms, such as delusions (Corlett et al., 2010).

Although its historical roots have rarely been discussed so far, Computational Psychiatry builds on seminal teleological theories of biological (and other) systems that provide fundamental constraints for any attempt to understand brain function. These include, for example, general systems theory (Von Bertalanffy, 1969), cybernetics and control theory (Wiener, 1948; Ashby, 1954, 1956; Conant and Ashby, 1970; Powers, 1973; Carver and Scheier, 1982; von Foerster, 2003; Seth, 2015a,b,c) and constructivism (Richards and von Glasersfeld, 1979). Some core ideas from these general theories of inference and control in biological systems have laid the foundation for recent concepts of perception and action in computational neuroscience (e.g., Mumford, 1992; Dayan et al., 1995; Rao and Ballard, 1999; Friston, 2005, 2010; Friston et al., 2006; Doya et al., 2011). For example, the central notion of radical constructivism, that the brain actively “constructs” a subjective reality from noisy and ambiguous sensory inputs (Richards and von Glasersfeld, 1979; von Foerster, 2003) rather than representing an objective outer reality that is reflected by sensory inputs, is expressed formally, using the language of probability theory, in the hierarchical Bayesian models we encounter below. Other central ideas, such as the notion that cognitive systems are self-referential and monitor themselves (von Foerster, 2003), are yet to be exploited fully, e.g., for models of metacognition.

This paper represents a first attempt to use some of these principles for articulating a novel theory of fatigue and how it may transition to depression. In brief, our account views fatigue and depression as metacognitive phenomena: a set of beliefs held by the brain about its own functional capacity, specifically a perceived lack of control over bodily states. This belief arises when attempts at homeostatic regulation fail to reduce the experience of chronic dyshomeostasis: enduring deviations between expected and sensed bodily states. These persistent deviations or prediction errors signal interoceptive surprise or, equivalently, low evidence for the brain's model of bodily processes. Before we can turn to this notion in more detail, we review some ideas on the role of perception (inference) and prediction (action selection) for homeostasis which originate from the longstanding literature mentioned above and have resurfaced in more recent work in computational neuroscience.

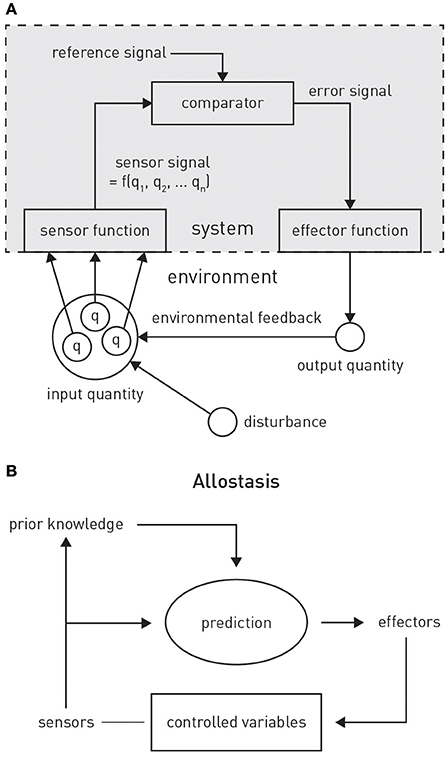

The brain is literally “embodied”: its structural and functional integrity depends on mechanical support, energy supply, and the provision of a suitable biochemical milieu provided by the body. As a corollary, the selectionist pressures which act upon the brain during evolution cannot be uncoupled from those acting upon the body's milieu intérieur (Claude Bernard); i.e., control of bodily homeostasis must constitute a primary purpose of brain function (Cannon, 1929). This control has long been known to involve reflex-like actions (comprising motor, endocrine, immunological, and autonomic processes) that are driven by feedback and the resulting “prediction error”—the discrepancy between an expected bodily state (a homeostatic setpoint2) and its actual level as signaled by sensory inputs from the body (Modell et al., 2015); see Figure 4. Feedback- or error-based reactive control has been studied for many vitally important variables (such as blood acidity, body temperature, blood levels of glucose and calcium, plasma osmolality) (Woods and Wilson, 2013), and the anatomy and physiology of neuronal circuits involved have been mapped out in detail by physiologists over many decades.
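
The error-driven reflex arc described above can be illustrated with a minimal sketch. It assumes a single bodily variable, a fixed setpoint, and a corrective action proportional to the prediction error; the function name `reactive_control`, the gain, and all numerical values are hypothetical and chosen only for illustration, not taken from the physiological literature.

```python
def reactive_control(setpoint, initial_state, gain, disturbance, n_steps):
    """Simulate a reflex arc that corrects deviations from a setpoint.

    At every step a constant disturbance acts on the bodily state, and the
    reflex applies a correction proportional to the prediction error.
    """
    state = initial_state
    trajectory = [state]
    for _ in range(n_steps):
        prediction_error = setpoint - state   # expected minus sensed state
        action = gain * prediction_error      # reflex-like corrective action
        state = state + action + disturbance  # body responds to action and perturbation
        trajectory.append(state)
    return trajectory


# Without a disturbance, the state converges to the setpoint.
recovery = reactive_control(37.0, 35.0, 0.5, 0.0, 20)

# With a sustained disturbance, a residual prediction error remains.
perturbed = reactive_control(37.0, 37.0, 0.5, -0.2, 40)
```

Under these assumptions, a sustained disturbance leaves the state at setpoint + disturbance / gain, so purely reactive proportional control does not abolish the prediction error while the perturbation persists.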

While this reactive type of control dominates the classical literature on homeostasis, it likely only represents the lowest layer in a hierarchy of temporally extended control mechanisms, with most immediate consequences. By contrast, assuming that the brain maintains a model of bodily states and the external environment, higher levels enable prospective control, with two essential components: inference (on current bodily state) and prediction (of its future evolution, on its own and in response to chosen actions) (Sterling, 2012; Penny and Stephan, 2014; Pezzulo et al., 2015; Seth, 2015a). There are several reasons why homeostatic regulation requires a model that enables inference (perception) and prediction (action selection). First, control is “blind” without perceptual inference: the brain does not have direct access to either bodily or environmental states, but has to infer them from sensory inputs which are inherently noisy and ambiguous. Disentangling the many external states that could underlie any given sensory input is an ill-posed inverse problem that requires constraints or regularization (e.g., by the priors of a generative model; Lee and Mumford, 2003; Kersten et al., 2004). Second, numerous experimental observations indicate that the brain engages in regulatory responses prior to a homeostatic perturbation, provided it can be anticipated (Sterling, 2012). In other words, a predicted deviation from a homeostatic setpoint is avoided by choosing suitable actions in advance. Importantly, setpoints are hierarchically structured, and changes in hierarchically lower setpoints may be necessary to prevent departure of bodily state from higher setpoints (Powers, 1973). For example, a temporary change in (lower) setpoints for blood pressure and catecholamine levels may be elicited to engage in fight-flight behavior that is necessary to ensure bodily integrity (higher setpoint). 
As we will see below, this longstanding notion of hierarchically structured homeostatic setpoints fits well with hierarchical Bayesian architectures, where the prior belief at one level is constrained by the prior belief at the next higher level.
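
The difference between reactive and prospective control can be sketched in a toy simulation. The sketch assumes, purely for illustration, that the controller predicts an upcoming perturbation perfectly and temporarily shifts its lower-level setpoint so that the resulting action cancels the perturbation; the function `simulate`, the `cold_exposure` schedule, and all values are hypothetical.

```python
def simulate(n_steps, target, gain, disturbances, anticipate):
    """Track one bodily variable under reactive or anticipatory control.

    disturbances maps a time step to the perturbation arriving at that step.
    With anticipate=True the controller is assumed to predict each
    perturbation perfectly and to shift its lower-level setpoint so that
    the resulting action cancels it.
    """
    state = target
    max_deviation = 0.0
    for t in range(n_steps):
        disturbance = disturbances.get(t, 0.0)
        setpoint = target
        if anticipate:
            # Temporary setpoint change that pre-empts the predicted perturbation.
            setpoint = target - disturbance / gain
        action = gain * (setpoint - state)
        state = state + action + disturbance
        max_deviation = max(max_deviation, abs(state - target))
    return max_deviation


cold_exposure = {10: -2.0}  # hypothetical perturbation at step 10
reactive_dev = simulate(30, 37.0, 0.5, cold_exposure, anticipate=False)
anticipatory_dev = simulate(30, 37.0, 0.5, cold_exposure, anticipate=True)
```

In this idealized setting the reactive controller only responds after the deviation has occurred, whereas the anticipatory controller keeps the state at the higher-level target by changing the lower setpoint. Real predictions are uncertain, which the sketch deliberately ignores.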

This anticipatory control or allostasis (“stability through change”; Sterling, 2014) necessarily requires a model capable of generating predictions. The notion of model-based allostatic regulation is a special case of the more general and long-standing view that the brain requires a model of the external world in order to implement optimal control. Specifically, seminal work by Conant and Ashby (1970) has resulted in an influential theorem “[…] which shows, under very broad conditions, that any regulator that is maximally both successful and simple must be isomorphic with the system being regulated. […] The theorem has the interesting corollary that the living brain, so far as it is to be successful and efficient as a regulator for survival, must proceed, in learning, by the formation of a model (or models) of its environment.”

This notion of anticipatory homeostatic control (allostatic control) has important ramifications. Significant perturbations of bodily states arise from the physical and social environments through which the brain navigates the body. For example, basic properties of the physical environment (e.g., ambient temperature, weather, physical activity required by geographical conditions, availability of food and water) have delayed but severe effects on key homeostatic variables (such as body temperature, blood glucose levels, plasma osmolality); these must be predicted in advance and incorporated into the selection of actions in order to avoid fatal effects (for some simple simulations, see Penny and Stephan, 2014). Similarly, in the social domain, learning about the (potentially hostile) intentions of other agents in a reactive way, by trial and error, is risky. Instead, a model or “theory of mind” of other agents' mental states (Frith and Frith, 2012), perhaps grounded in the prolonged interaction with early-life caregivers, is required to predict and avoid interactions with potentially deleterious consequences for social status, access to resources, and ultimately bodily integrity. This means that anticipatory control of bodily states would be drastically incomplete if the brain did not possess a model which enabled inference on current states of the physical and social environment and predicted their trajectories into the future. In brief, principles of anticipatory homeostatic control and the necessity of model-based prediction must generalize beyond the body and apply to physical and social domains of the external world. 
In the following, the term “external world” is used to refer to both the body and the physical and social world outside the body; this is for notational brevity only and not meant to disregard differences in how bodily, social and physical states can influence brain activity in general and the emergence of fatigue in particular; a topic we return to below.

The notion that the brain maintains and continuously updates a model of its external world for perceptual inference and anticipatory control has been around for a considerable period (Conant and Ashby, 1970). What could such a model look like? Across various proposals, two main design features re-occur and are supported by strong theoretical and empirical arguments. That is, (i) the brain's model is likely to follow principles of probability theory and hence represent a “generative” model; and (ii) structurally, it is plausible to assume that this has a hierarchical structure.

A so-called “generative model” directly follows from the basic laws of probability theory and essentially implements Bayes' theorem—a simple but fundamental statement about how uncertain sources of information (represented by conditional probabilities) can be combined (Figure 1). In the context of perception, a generative model m combines a “likelihood function” p(y | x, m) (a probabilistic mapping from hidden states of the world, x, to sensory inputs y), with a “prior” p(x | m) (an a priori probability distribution of the world's states) (Figure 1). The likelihood describes how any given state of the world causes a sensory input with a certain probability; the prior expresses the range of values environmental states inhabit a priori and thus encodes learned environmental statistics. One way to understand why this model is called “generative” is to note that it can be used to generate or simulate sensory inputs (data): this simply requires that one samples a value from the prior distribution and plugs it into the likelihood function. This process can be turned around: that is, given some observed data (experienced sensory inputs), Bayes' theorem allows one to compute the probability of the hidden states (the “posterior” p(x | y, m))—this is inference:

$$p(x \mid y, m) = \frac{p(y \mid x, m)\, p(x \mid m)}{p(y \mid m)}$$

As inference corresponds to inverting the process of data generation (from hidden states to sensory inputs), it is also referred to as “inversion” of the generative model, or solving the “inverse problem.” Finally, an important component of a generative model is the model evidence p(y | m) (the denominator from Bayes' theorem). The evidence represents a principled measure of the goodness of a generative model which trades off accuracy and complexity (Stephan et al., 2009; Penny, 2012); notably, its logarithm relates to the information-theoretic concept of (Shannon) surprise, S (sometimes also referred to as surprisal or self-information to distinguish it from psychological notions of surprise). Specifically, the log evidence is identical to negative surprise about seeing the data under model m:

$$\log p(y \mid m) = -S(y \mid m)$$

In other words, a good model is one that minimizes the surprise about encountering the data. Conversely, persistent surprise is the hallmark of a bad model.
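
The three uses of a generative model discussed above (generating data, inverting the model, and scoring it by surprise) can be made concrete in a minimal sketch. It assumes a one-dimensional Gaussian prior and a Gaussian likelihood, for which the posterior and the evidence have closed forms; the function names and parameter values are hypothetical choices for illustration only.

```python
import math
import random


def sample_from_model(prior_mean, prior_var, noise_var, rng):
    """Generate data: draw a hidden state from the prior, then a sensory input."""
    x = rng.gauss(prior_mean, math.sqrt(prior_var))  # hidden state from p(x | m)
    y = rng.gauss(x, math.sqrt(noise_var))           # sensory input from p(y | x, m)
    return x, y


def invert_model(y, prior_mean, prior_var, noise_var):
    """Posterior p(x | y, m): a precision-weighted compromise of prior and data."""
    prior_precision = 1.0 / prior_var
    likelihood_precision = 1.0 / noise_var
    post_precision = prior_precision + likelihood_precision
    post_mean = (prior_precision * prior_mean + likelihood_precision * y) / post_precision
    return post_mean, 1.0 / post_precision


def surprise(y, prior_mean, prior_var, noise_var):
    """Shannon surprise S = -log p(y | m); here p(y | m) is itself Gaussian."""
    evidence_var = prior_var + noise_var
    log_evidence = (-0.5 * math.log(2.0 * math.pi * evidence_var)
                    - 0.5 * (y - prior_mean) ** 2 / evidence_var)
    return -log_evidence


hidden_state, sensory_input = sample_from_model(0.0, 1.0, 1.0, random.Random(0))
posterior_mean, posterior_var = invert_model(2.0, 0.0, 1.0, 1.0)
expected_input = surprise(0.0, 0.0, 1.0, 1.0)
unexpected_input = surprise(4.0, 0.0, 1.0, 1.0)
```

With equal prior and sensory precision, the posterior mean lies halfway between the prior mean and the observation, and an input far from what the model predicts carries higher surprise (lower log evidence).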

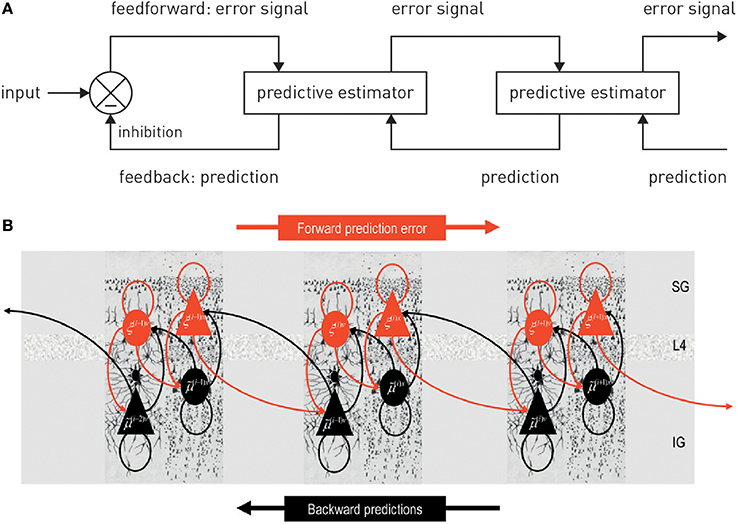

Generative models can be expressed in a hierarchical form, where each level provides a prediction (prior) for the state of the level below; this prediction can be compared against the actual state (likelihood), resulting in a prediction error which can be signaled upwards for updating the prior (Figures 2, 3). This is an extremely general concept which not only underlies common models in statistics (Kass and Steffey, 1989), but provides a key metaphor for models of brain function (e.g., Rao and Ballard, 1999; Lee and Mumford, 2003; Friston, 2005, 2008; Petzschner et al., 2015), such as predictive coding described below.
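
As an illustration of how prediction errors can drive belief updates in a hierarchy, the following sketch performs gradient-based inference in a two-level linear Gaussian model. This is a simplified stand-in, not the specific schemes of the cited papers: the model structure, the function `infer_two_level`, the learning rate, and the variances are all assumptions made for illustration.

```python
def infer_two_level(y, top_prior_mean, var_y=1.0, var_1=1.0, var_2=1.0,
                    learning_rate=0.1, n_iter=500):
    """Estimate two hidden levels from one observation by error-driven updates.

    Assumed model: y ~ N(x1, var_y), x1 ~ N(x2, var_1), x2 ~ N(top_prior_mean, var_2).
    Each level is updated by the precision-weighted prediction error arriving
    from below minus the error it generates with respect to the level above.
    """
    x1 = top_prior_mean
    x2 = top_prior_mean
    for _ in range(n_iter):
        err_y = (y - x1) / var_y               # sensory prediction error
        err_1 = (x1 - x2) / var_1              # error between level 1 and its prior from level 2
        err_2 = (x2 - top_prior_mean) / var_2  # error between level 2 and the top-level prior
        x1 += learning_rate * (err_y - err_1)
        x2 += learning_rate * (err_1 - err_2)
    return x1, x2


level_1, level_2 = infer_two_level(y=3.0, top_prior_mean=0.0)
```

With equal variances, the estimates settle between the observation and the top-level prior, with the lower level closer to the data and the higher level closer to the prior.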

Figure 2. (A) A graphical summary of predictive coding. See main text for details. Figure reproduced, with permission, from Rao and Ballard (1999). (B) A possible neuronal implementation of predictive coding. See main text for details. Figure reproduced, with permission, from Friston (2008).

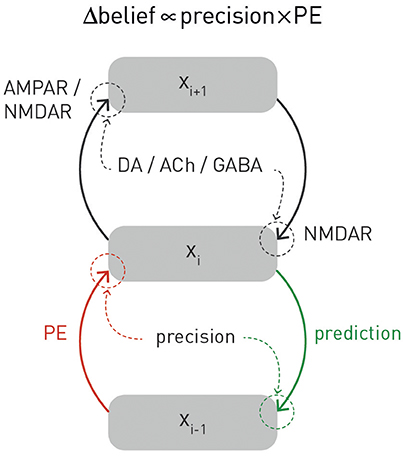

Figure 3. A graphical summary of computational and physiological key components of hierarchical Bayesian inference. Computationally, prediction errors are conveyed by ascending or forward connections, while predictions are signaled via backward or descending connections. Critically, both experience a weighting by precision. Physiologically, the currently available evidence suggests that, in cortex, prediction errors are signaled via ionotropic glutamatergic receptors (AMPA and NMDA receptors), predictions via NMDA receptors, while precision-weighting is either implemented through neuromodulatory inputs (e.g., dopamine or acetylcholine) or by local GABAergic mechanisms. The figure is adapted, with permission, from Stephan et al. (2016b).

The hierarchical form of generative models fits remarkably well with structural principles of cortical organization, where the sensory processing streams consist of hierarchically related cortical areas. This hierarchy is defined anatomically in terms of different cytoarchitectonic properties and types of synaptic connections (bottom-up/ascending/forward connections vs. top-down/descending/backward connections) (Felleman and Van Essen, 1991; Hilgetag et al., 2000). These connections are thought to have different functional properties which are compatible with hierarchical Bayesian inference. For example, in the visual system, anatomical and physiological studies suggest that descending connections convey predictions about activity in lower areas (e.g., Alink et al., 2010; Nassi et al., 2013; Vetter et al., 2015) and have largely inhibitory effects (e.g., Angelucci and Bressloff, 2006; Andolina et al., 2013), as required for “explaining away” in predictive coding (see the discussion in Nassi et al., 2013). Furthermore, pharmacological and computational studies of the auditory mismatch negativity (MMN) system have provided evidence for NMDA receptor dependent signaling of prediction errors via ascending connections (Wacongne et al., 2012; Schmidt et al., 2013). In summary, while definitive proof is outstanding, there is general consensus that ascending connections serve to signal prediction errors up the hierarchy, while predictions are communicated from higher to lower areas via descending connections (for reviews, see Friston, 2005; Corlett et al., 2009; Figure 3 provides an overview).

In the past two decades, theories of perception have converged on the idea that perception corresponds to inverting a hierarchical generative model of sensory inputs (Dayan et al., 1995; Rao and Ballard, 1999; Friston, 2005). In some sense, this idea is not new: more than a century ago, the physiologist Helmholtz already suggested that the brain would have to invert the process of how a visual image was generated in order to infer the underlying physical cause (perception as “unconscious inference”; Helmholtz, 1860/1962). The more recent formalization of this notion under principles of probability theory is commonly referred to as the “Bayesian brain” hypothesis (Friston, 2010; Doya et al., 2011). In addition to the reasons given above, the general idea of perception as inversion of a hierarchical generative model derives from numerous empirical observations and theoretical arguments. Here, we briefly summarize a few central points and point the interested reader to more detailed literature. First, the sensory inputs the brain receives are noisy and often show a non-linear dependence on states in the world; this introduces the need for regularization by prior expectations or knowledge (Friston, 2003; Lee and Mumford, 2003; Kersten et al., 2004). Second, it can be shown that the integration of uncertain sources of information according to principles of probability theory (Bayesian inference) is optimal; this implies that the brain should have evolved to implement perceptual inference in such a way that Bayesian inference is approximated (Geisler and Diehl, 2002). Third, a large body of psychophysical experiments indicates that basic perceptual judgements and multi-sensory integration show clear evidence for the operation of Bayesian inference (for overviews, see Knill and Richards, 1996; Geisler and Kersten, 2002; Petzschner et al., 2015). Finally, a generative model not only supports inference, but also allows for predictions.
This can be achieved in several ways. For example, predictions about future sensory inputs can be derived from the model's posterior predictive density, and predictions about future states of the world under a chosen action or goal can be derived from the model's posterior dynamics (for example, see Penny and Stephan, 2014).

This link from inference to prediction is important because it provides a basis for coupling perception to action, which is fundamental for homeostatic control, as described above. Generally, the challenge of control is framed by asking, informed by an estimate of the current state of the world (and possibly a prediction of how it evolves), what action optimizes a particular criterion (a “utility function” or “cost function”). One framework to address this challenge is Bayesian decision theory (Körding, 2007; Dayan and Daw, 2008; Daunizeau et al., 2010). In a nutshell, this identifies an optimal action as one that maximizes the “expected utility” (where “expected” refers to a weighted average; i.e., the utilities of predicted outcomes are weighted by their probabilities). The definition of utility, however, is not trivial. One common choice is to define utility in relation to “rewards.” This, however, only shifts the problem and raises the question of what constitutes “reward” for the brain (compare the discussion in Friston et al., 2012). From a homeostatic perspective, the utility or reward afforded by a particular action depends on four estimates based on inference and prediction:

• an estimate of the current bodily state (interoception);

• an estimate of the current environmental state (exteroception);

• a prediction of how these states would evolve in time (provided by a model of bodily and environmental dynamics);

• and a prediction of the degree to which the action considered will keep bodily state close to a homeostatic setpoint over time (allostatic control).

Current models of decision-making do not incorporate all of these aspects, and first attempts to account for homeostasis and allostasis in formal models of decision-making have only surfaced relatively recently (e.g., Keramati and Gutkin, 2014; Penny and Stephan, 2014; Pezzulo et al., 2015).
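To make the notion of expected utility under a homeostatic criterion concrete, the following is a minimal sketch rather than a model from the literature cited above. It assumes a hypothetical utility function (negative squared distance of body temperature from a setpoint) and invented outcome probabilities for two candidate actions; all names and numbers are illustrative assumptions.

```python
def expected_utility(outcomes, utility):
    """Probability-weighted average utility over (probability, state) pairs."""
    return sum(p * utility(state) for p, state in outcomes)


def homeostatic_utility(temperature, setpoint=37.0):
    """Hypothetical utility: negative squared distance from the setpoint."""
    return -(temperature - setpoint) ** 2


# Hypothetical predicted body temperatures (deg C) under two candidate actions.
# Each entry is a list of (probability, predicted temperature) pairs.
predicted_outcomes = {
    "stay_in_sun": [(0.7, 38.5), (0.3, 37.5)],
    "seek_shade": [(0.8, 37.0), (0.2, 37.5)],
}

# Score every action and pick the one with the highest expected utility.
scores = {
    action: expected_utility(outcomes, homeostatic_utility)
    for action, outcomes in predicted_outcomes.items()
}
best_action = max(scores, key=scores.get)
print(best_action, scores)
```

In this toy setting the action whose predicted outcomes stay closest to the setpoint wins; a fuller treatment would derive the outcome probabilities from a model of bodily and environmental dynamics, as listed in the four estimates above.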

Importantly, perception and action do not operate in isolation, nor is there a unidirectional dependency of action on perception. Any chosen action changes the world (and/or the way the brain samples it³) and hence the feedback the brain receives in terms of new sensory inputs. This sensory feedback (likelihood function) is combined with the current prior belief (prediction) held by the agent, resulting in a belief update about the state of the world (posterior probability) which, in turn, can inform new actions. This closes the loop from perception to action.

In summary, this section discussed homeostatic and allostatic control as fundamental objectives for the brain and reviewed long-standing concepts that highlight the importance of closed loops of perception and action. In particular, we have emphasized the notion that homeostatic control is not simply reactive, but proactive or anticipatory, and rests on a model of the external world which includes both the body and the influences it may receive from physical and social domains of the environment. Of course, these ideas raise the question how predictive models of this sort may actually be implemented by the brain. This question has been addressed by several recent theories, usually with a focus either on the body (Seth et al., 2011; Seth, 2013, 2015a; Feldman-Barrett and Simmons, 2015) or its environment (Rao and Ballard, 1999; Friston, 2005). In the next section, we review two classes of theories—predictive coding and active inference—which have recently begun to find application to questions of interoception and homeostasis.

Predictive coding is a long-standing idea about neural computation that was initially formulated for information processing in the retina (Srinivasan et al., 1982). In its current form, predictive coding postulates that perception rests on the inversion of a hierarchical generative model of sensory inputs which reflects the hierarchical structure of the environment and predicts how sensory inputs are generated from (physical) causes in the world (Rao and Ballard, 1999; Friston, 2005). By inverting this model, the brain can infer the most likely cause (environmental state) underlying sensory input; this process of inference corresponds to perception. At any given level of the model, it is the (precision-weighted, see below) “prediction error” that is of interest—the deviation of the actual input from the expected input. Prediction errors signal that the model needs to be updated and thus drive inference and learning.

Anatomically, models of predictive coding are inspired by the remarkably hierarchical structure of sensory processing streams in cortex, where the laminar patterns of cortical-cortical connections define their function as ascending (forward or bottom-up) or descending (backward or top-down) connections and establish hierarchical relations between cortical areas (Felleman and Van Essen, 1991; Hilgetag et al., 2000). Computationally, the key idea of predictive coding is that cortical areas communicate in loops: Each area sends predictions about the activity in the next lower level of the hierarchy via backward connections; conversely, the lower level computes the difference or mismatch between this prediction and its actual activity and transmits the ensuing prediction error by forward connections to the higher level, where this error signal is used to update the prediction (Figures 2, 3). This recurrent message passing takes place across all levels of the hierarchy until prediction errors are minimized throughout the network. In the words of Rao and Ballard (1999): “[…] neural networks learn the statistical regularities of the natural world, signaling deviations from such regularities to higher processing centers. This reduces redundancy by removing the predictable, and hence redundant, components of the input signal.” This is a computationally attractive proposition because it satisfies information-theoretical criteria for a sparse code (Rao and Ballard, 1999).
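The recurrent exchange of predictions and prediction errors can be illustrated with a deliberately reduced sketch: a single higher-level estimate predicts one sensory input, and is adjusted until ascending and descending errors balance. This is an illustrative assumption-laden toy (identity mapping from cause to input, Gaussian noise, hypothetical learning rate `lr` and step count), not the implementation of any of the cited models.

```python
def predictive_coding(y, prior_mean, pi_prior, pi_data, lr=0.05, n_steps=500):
    """Iteratively settle on an estimate mu of the cause of input y.

    Assumes an identity mapping from cause to input, so the top-down
    prediction of the input is simply mu.
    """
    mu = prior_mean
    for _ in range(n_steps):
        sensory_error = y - mu        # ascending error: input minus prediction
        prior_error = mu - prior_mean  # deviation of the estimate from the prior
        # Precision-weighted errors push the estimate in opposite directions.
        mu += lr * (pi_data * sensory_error - pi_prior * prior_error)
    return mu


estimate = predictive_coding(y=2.0, prior_mean=0.0, pi_prior=1.0, pi_data=3.0)
# For this linear Gaussian case the fixed point is the precision-weighted
# average of prior mean and input.
closed_form = (3.0 * 2.0 + 1.0 * 0.0) / (3.0 + 1.0)
print(estimate, closed_form)
```

The loop stops changing the estimate once the precision-weighted errors cancel, which for this linear Gaussian toy coincides with the Bayesian posterior mean.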

In this scheme, minimizing prediction errors under the predictions encoded by the synaptic weights of backward connections in the hierarchy corresponds to hierarchical Bayesian inference and allows for computing the posterior probability of the causes, given the sensory data. Notably, plausible neuronal implementations exist which are compatible with known neuroanatomy and neurophysiology (Friston, 2005, 2008; Bastos et al., 2012); for a tutorial introduction, see Bogacz (in press).

A notion closely related to predictive coding is the idea that layers of hierarchical generative models may not predict the state of the next lower level, but its temporal evolution. This is known as hierarchical filtering and emphasizes the importance of taking into account the volatility of the environment, i.e., the temporal instability of its statistical structure, such as the probabilities by which one event causes another (Behrens et al., 2007; Mathys et al., 2011). Here, a hierarchical generative model combines a lower layer with value prediction errors about environmental variables with upper layers where volatility prediction errors drive inference and learning (Mathys et al., 2014). One concrete implementation of this idea is the hierarchical Gaussian filter (HGF; Mathys et al., 2011, 2014) which allows one to estimate subject-specific parameters encoding an individual's approximation to Bayes-optimal hierarchical learning.

One property of hierarchical Bayesian models deserves particular emphasis: under broad assumptions (i.e., for all distributions from the exponential family; Mathys, 2016), hierarchical Bayesian belief updates have a generic form with remarkably simple interpretability. At any given level i, belief updates Δμi are proportional to the prediction error (sent from the level below) but weighted by uncertainty or, more specifically, a precision ratio (Figure 3). This ratio denotes the relation between the estimated precision of the input from the level below (e.g., signal-to-noise ratio of a sensory input) and the precision of the prior belief. For example, in the case of the HGF, this takes the following form, where $\delta_{i-1}$ denotes the prediction error from the level below, $\hat{\pi}_{i-1}$ its expected precision, and $\pi_i$ the precision of the belief at level i:

$$\Delta\mu_i \propto \frac{\hat{\pi}_{i-1}}{\pi_i}\,\delta_{i-1} \tag{3}$$

Here, the numerator of this precision ratio represents the expected precision of the input from the level below (i.e., the agent's estimate of the signal-to-noise ratio of the input), whereas the denominator encodes the precision of the current belief. That is, the impact of prediction error on a belief update is smaller the more precise (less uncertain) the prior belief and larger the more precise (higher signal-to-noise) the input from the level below. Evidence from anatomical and physiological studies has established bridges between the computational and physiological components of this hierarchical precision-weighted message passing: prediction error signaling via forward connections likely rests on glutamatergic (AMPA and NMDA) receptors, prediction signaling via backward connections probably exclusively on NMDA receptors, while precision-weighting is assumed to draw on mechanisms which modulate postsynaptic gain, such as neuromodulatory (e.g., dopamine or acetylcholine) or local GABAergic inputs (Friston, 2009; Corlett et al., 2010; Adams et al., 2013b); for a summary, see Figure 3.

Predictive coding is one particular instantiation of the Bayesian brain hypothesis and offers an attractive foundation for studying interoception (Seth, 2013). However, predictive coding is limited to perception and does not directly speak to action selection and control, which is of fundamental importance for homeostasis. The link between perception and action can instead be studied in the framework of related theories which share the core ideas of predictive coding but generalize it to action selection; these include perceptual control theory (PCT; Powers, 1973, 1978) and active inference (Friston, 2009; Friston K. J. et al., 2010).

PCT originated from the control theoretic principles of cybernetics (Wiener, 1948) and cognitive theories emphasizing the self-referential structure of the brain, such as radical constructivism (Richards and von Glasersfeld, 1979; von Foerster, 2003). The central premise is that any adaptive system tries to control certain quantities in the environment, q, that are essential for the system's existence and survival (Figure 4A). Critically, as it can only infer the value of q through perception, controlling q amounts to ensuring that the sensory inputs reflecting q remain at the desired (expected) level. In other words, the system will resist any external perturbations or disturbances by eliciting appropriate actions that restore the expected sensory input. This control can be exerted by the classical negative feedback loop of cybernetics (Figure 4A), where an internal reference (setpoint or goal signal) is compared to incoming sensory input reflecting the state of q. The resulting mismatch or prediction error serves to elicit actions which restore q to the expected value. In Powers' (1973) words: “The reference signal is a model [our emphasis] inside the behaving system against which the sensor signal is compared: behavior is always such as to keep the sensor signal close to the setting of this reference signal.”
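The negative feedback loop described here can be sketched in a few lines. The example below is an illustrative toy under stated assumptions: the sensor reads the controlled quantity q without distortion, action is proportional to the mismatch with a hypothetical `gain`, and a single external disturbance is applied at one time step. None of these choices is taken from Powers (1973).

```python
def run_control_loop(reference, q0, disturbance, gain=0.5, n_steps=50):
    """Classical negative feedback: act so that the sensed value of q
    stays close to the internal reference signal."""
    q = q0
    trace = []
    for t in range(n_steps):
        q += disturbance(t)         # external perturbation of the quantity q
        sensed = q                  # identity sensor (assumption)
        error = reference - sensed  # comparator: reference minus sensor signal
        q += gain * error           # action restores q toward the reference
        trace.append(q)
    return trace


# A single disturbance of +1.5 at time step 10, none otherwise.
trace = run_control_loop(
    reference=1.0,
    q0=1.0,
    disturbance=lambda t: 1.5 if t == 10 else 0.0,
)
print(trace[9], trace[10], trace[-1])
```

Note that the loop never specifies a target action; it only specifies the desired sensor reading, and action follows from the mismatch.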

Figure 4. (A) Principles of classical feedback control. Figure is reproduced, with permission, from Powers (1973). (B) A graphical summary of allostasis and its dependence on predictions about future bodily states. Figure is reproduced, with permission, from Sterling (2012).

Critically, PCT postulates that control systems are, in many cases, structured hierarchically, where the “action” of higher systems consists of providing the reference or goal signal for lower systems. As a consequence, in order to reach a high-order goal, the relevant systems level (say i) does not need to directly access any actuators or specify a chain of commands; all it has to do is to alter the reference signal for the next lower system i − 1. This will adjust the output from i − 1 and thus the reference signal for the next lower system i − 2, and so forth, until a level is reached whose output drives actuators and thus impacts on the environment. Intriguingly, nowhere in this chain of downward changes is the actual behavioral act specified; it is only the goals (expected sensory inputs) that are re-specified at each level of the hierarchy when the sensed environmental state does not correspond to the goal state (reference signal) at any level of the hierarchy. In a nutshell, “…control systems control what they sense, not what they do.” (Powers, 1973; his emphasis).
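A two-level cascade gives a feel for how a higher system can act purely by setting the reference signal of a lower one. The following is a hypothetical sketch: the higher level senses a position and outputs a desired velocity, the lower level senses velocity and drives the actuator. The variable names, gains, and time step are assumptions chosen for illustration.

```python
def cascade(goal, n_steps=400, k_high=0.5, k_low=0.5, dt=0.1):
    """Two-level control hierarchy in which the higher level never
    addresses the actuator directly."""
    position, velocity = 0.0, 0.0
    for _ in range(n_steps):
        # Higher level: its output is the reference signal for the lower level.
        velocity_reference = k_high * (goal - position)
        # Lower level: reduces the mismatch between sensed velocity and
        # the reference it was handed from above.
        velocity += k_low * (velocity_reference - velocity)
        # Environment: position integrates velocity over one time step.
        position += dt * velocity
    return position, velocity


final_position, final_velocity = cascade(goal=1.0)
print(final_position, final_velocity)
```

The goal is reached although no level ever encodes a trajectory or a motor command sequence; each level only re-specifies what the level below should sense.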

PCT was formulated at a time when neither the hierarchical structure of the human brain was well understood nor Bayesian ideas of perception had been well developed. These concepts have informed a more general framework, active inference (Friston, 2009; Friston K. J. et al., 2010), which, although not directly building on PCT, shares its fundamental notion that control is hierarchically organized and directed toward sensory input, not motor output. Active inference derives from the free energy principle (Friston et al., 2006; Friston, 2009, 2010) which postulates that biological agents strive to minimize surprise about their sensory inputs. In the general case, however, this requires integrating over all possible hidden states of the world, a computationally intractable problem. A solution is provided by a more easily computable quantity called “free energy” which represents an upper bound on surprise. A free energy minimizing system thus corresponds to a system which experiences minimum surprise about its sensory inputs. This notion is similar to the functional principle underlying PCT, but is formulated in terms of probability theory and thus tied closely to inference and generative models. The free energy principle has found numerous applications to cognition, suggesting efficient algorithms for how perceptual inference and learning can be implemented by a hierarchical generative model that maps onto the known neuroanatomy and neurophysiology of the cortex (Friston et al., 2006; Friston, 2008, 2009; Bogacz, in press).
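For readers who want to see why free energy bounds surprise, the standard variational decomposition can be written as follows. The notation is an assumption introduced here for illustration: y denotes sensory inputs, x hidden states, m the generative model, and q(x) an approximate posterior (recognition density) over hidden states.

$$F = \mathbb{E}_{q(x)}\left[\ln q(x) - \ln p(y, x \mid m)\right] = -\ln p(y \mid m) + \mathrm{KL}\left[q(x)\,\|\,p(x \mid y, m)\right] \;\geq\; -\ln p(y \mid m)$$

Because the Kullback-Leibler divergence is non-negative, F can never fall below the surprise $-\ln p(y \mid m)$, and the bound becomes tight when q(x) equals the true posterior. Evaluating F requires only the approximate posterior and the generative model, which is what makes it tractable where surprise itself is not.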

Notably, the brain could reduce free energy in two major ways (Friston, 2009): (i) by updating its beliefs or expectations; this corresponds to adjusting its generative model of sensory inputs, as postulated by predictive coding; or (ii) by selecting those actions which lead to sensory inputs that are in accordance with the brain's expectations; this is active inference. Simply speaking, active inference suggests that predictions (prior expectations) about sensory inputs define preferences or goals that engender behavior (Friston et al., 2015). Similar to PCT, prior expectations at a high level in the hierarchy define a setpoint against which current sensory input is evaluated, and actions are automatically elicited by lower levels until any mismatch is eliminated. That is, actions arise from a hierarchical cascade of changes in expectations that eventually lead to reflex-like motor behavior at the lowest level in order to yield the expected sensory input (Adams et al., 2012, 2013a; Friston et al., 2015).

Hierarchical Bayesian theories have begun to play an influential role in the treatment of interoception and homeostatic control. Although not specifying a particular computational mechanism, a seminal paper by Paulus and Stein (2006) highlighted the importance of predictive processes for understanding interoception and its role in psychopathology, specifically anxiety. More recently, several proposals have linked interoception and homeostatic/allostatic control to predictive coding and active inference (Seth et al., 2011; Gu et al., 2013; Seth, 2013; Feldman-Barrett and Simmons, 2015).

While these proposals have remained unspecific about the exact implementation of active inference for allostatic control, they have incorporated anatomical and physiological knowledge about the neuronal circuits for interoception and homeostatic control (for reviews, see Saper, 2002; Craig, 2002, 2003; Critchley and Harrison, 2013). Viscerosensory information about a wide range of bodily states—including bodily integrity (pain, inflammatory mediators), cardiovascular (e.g., blood pressure, oxygenation), humoral (e.g., plasma osmolality), physical (e.g., body temperature), metabolic (e.g., levels of glucose and hormones like insulin, ghrelin, leptin), immunological (e.g., cytokines), or mechanical (e.g., dilation of internal organs) properties—reaches the brain via three main channels: visceral afferents that enter the spino-thalamic tract via spinal cord lamina 1, cranial nerves IX (glossopharyngeal) and X (vagus), and humoral information which is sensed by circumventricular organs and specialized hypothalamic neurons situated outside the blood-brain barrier. These channels reach the thalamus (ventroposterior and ventromedial nuclei)—either directly or indirectly via brain stem nuclei including the nucleus of the solitary tract, parabrachial nucleus, and periaqueductal gray—and eventually target the viscerosensory cortex. The latter essentially comprises posterior and mid-insular cortex which represent a viscerotopic map of bodily state with respect to numerous physiological variables (Cechetto and Saper, 1987; Allen et al., 1991; Craig, 2002). 
Their efferent connections convey information about bodily state to cortical visceromotor areas—such as anterior insular cortex (AIC), anterior cingulate cortex (ACC), subgenual cortex (SGC), and orbitofrontal cortex (OFC)—which, in turn, send projections to hypothalamus, brainstem and spinal cord nuclei (Mesulam and Mufson, 1982; Hurley et al., 1991; Carmichael and Price, 1995; Freedman et al., 2000; Chiba et al., 2001; Vogt, 2005; Hsu and Price, 2007) in order to control autonomic, endocrine and immunological reflex arcs.

Based on this general anatomical layout, several computationally inspired proposals have been put forward, although so far without mathematically concrete implementations. Seth et al. (2011) conceptualized interoception as a predictive coding process combined with corollary discharge. In their concept, the AIC was assigned a central role as comparator (Gray et al., 2007) receiving corollary discharges (efference copies) of autonomic control signals from visceromotor regions like the ACC. Subsequent formulations based on active inference no longer understood autonomic control signals originating from visceromotor regions as “commands,” but as predictions of bodily states which are fulfilled by autonomic reflexes implemented by lower (hypothalamic and brainstem) centers (Gu et al., 2013; Seth, 2013; Feldman-Barrett and Simmons, 2015; Pezzulo et al., 2015).

In the following, we build on and extend the above models to formulate a theory of fatigue that connects hierarchical Bayesian inference to metacognition (cognition about cognition). Specifically, we unpack and extend a mechanism proposed recently as part of a list of priority problems for psychiatry: “With respect to fatigue, can we identify distinct patient subgroups in whom the brain's model of interoceptive inputs signal constant surprise because of persistent violation of fixed beliefs (homoeostatic setpoints) regarding metabolic states or bodily integrity and in whom this enduring dyshomoeostasis induces high-order beliefs about lack of control and low self-efficacy?” (Problem 8 in Stephan et al., 2016a). In the following, we describe how homeostatic regulation can be regarded as a problem of hierarchical Bayesian inference and control, not dissimilar to previous accounts but with three novel aspects: (i) an explicit discussion of how conventional homeostatic concepts can be transformed into Bayesian counterparts, including an extremely simple but concrete illustration of how active inference could mediate homeostatic control; (ii) the extension of active inference to a formal definition of allostatic control; and (iii) the addition of a metacognitive layer to the interoceptive hierarchy.

The conventional cybernetic view of homeostasis regards the brain's task as ensuring that its carrier (the body) inhabits a limited number of states which are compatible with survival, for example, being within a narrow range of body temperature or blood oxygenation. From a control theory perspective, this amounts to keeping sensory signals of bodily state close to setpoints, which are defined by a reference signal that is provided to a comparator unit (compare the traditional cybernetic circuit implementing feedback control in Figure 4A).

We now formulate this circuit and its components in a way that provides a basis for extending homeostatic to allostatic control. Under a Bayesian perspective, homeostatic setpoints can be defined as the expectations (means) of prior beliefs about the states the body should inhabit. These prior beliefs are instantiated biophysically by “hard-wired” local circuits in “effector regions” that control homeostatic reflex arcs, such as the hypothalamus, brain stem nuclei like the periaqueductal gray (PAG), and autonomic cell columns in the spinal cord (Craig, 2003). The “homeostatic range” (of values compatible with life) is reflected by prior variance: priors of vitally important variables (such as blood oxygenation) are extremely tight (low variance), whereas other beliefs (e.g., about blood pressure) can afford to be considerably wider (high prior variance). Notably, all of these beliefs are subject to evolutionary pressure: depending on how well they support homeostasis under the conditions of a given environment and thus maintain bodily integrity and survival, they (or rather, the neuronal structures encoding them) will be more or less likely to be selected out.

Given this notion of a homeostatic setpoint as a belief about physiological states the body should inhabit, one can now formulate a basic homeostatic reflex arc in Bayesian terms (Figure 5), including its control by higher-order centers (allostasis). Specifically, we consider how a particular physiological state x can be controlled by actions elicited by a neuronal homeostatic reflex arc, e.g., in the hypothalamus or a brain stem nucleus, ensuring that x is kept within a homeostatic range (prior belief about its value). A key property of this formulation is that corrective action is more vigorous or rapid the higher the deviation of bodily states from prior expectations and the more precise these expectations (the tighter the homeostatic range). For clarity and simplicity, we only consider a very basic scenario here. While our approach is inspired by more general and sophisticated treatments of active inference formulated under the free-energy principle (Friston K. J. et al., 2010), the following derivation presents, to our knowledge, a first mathematically concrete proposal of an active inference mechanism for homeostatic reflexes under allostatic control. We emphasize that the following model is by no means complete, but should be seen as a mere starting point for developing generative models of allostatic control and metacognitive evaluation.

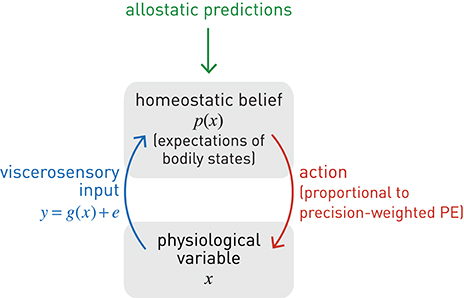

Figure 5. A graphical summary of a homeostatic reflex arc and its modulation by allostatic predictions. Blue lines: sensory inputs; red lines: prediction errors; green lines: predictions.

Let us begin from the perspective of perceptual inference as Bayesian belief updating, i.e., how one could determine the most likely value of bodily state x, given noisy sensory input which is sampled sequentially. Here, we examine the simplest case where x is assumed not to evolve or experience any perturbations over the period of observation. While, in this context, x is thus a constant state of the body, the brain's belief about x is updated sequentially, based on noisy sensory inputs. (Because of this subtle difference, in the following few paragraphs on perception, we write the bodily state x as a time-invariant variable while mean and variance of the belief about x are time-dependent. In subsequent paragraphs on action, the opposite is the case.) This belief can be described, for example, as a normal distribution with mean μt and precision (inverse variance) πt at time t:

$$p(x) = \mathcal{N}\left(x;\ \mu_t,\ \pi_t^{-1}\right) \tag{4}$$

At any time t, viscerosensory input y results from some form of neuronal coding (transformation) g of x and is affected by inherent noise of the sensory channel (with constant precision πdata):

$$p(y \mid x) = \mathcal{N}\left(y;\ g(x),\ \pi_{data}^{-1}\right) \tag{5}$$

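or, equivalently:

$$y = g(x) + \varepsilon, \qquad \varepsilon \sim \mathcal{N}\left(0,\ \pi_{data}^{-1}\right) \tag{6}$$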

In this context, the goal of perceptual inference would be to infer the value of x given repeated samples of the noisy viscerosensory signal y. This corresponds to updating one's estimates of the sufficient statistics of x (μt and πt), where the estimate at time t serves as the prior for the next belief update (“today's posterior is tomorrow's prior”). That is, using Bayes' theorem, one can sequentially transform a prior belief $p(x \mid \mu_t, \pi_t)$ into a posterior belief $p(x \mid \mu_{t+1}, \pi_{t+1})$, based on new sensory data yt. Specifically, this sequential belief update would obey the following simple rule (see Mathys, 2016 for details):

$$\pi_{t+1} = \pi_t + \pi_{data}, \qquad \mu_{t+1} = \mu_t + \frac{\pi_{data}}{\pi_t + \pi_{data}}\left(y_t - g(\mu_t)\right) \tag{7}$$

Here, the posterior mean results from updating the current estimate (prediction or prior mean) with the precision-weighted prediction error—where the latter corresponds to the difference between the actual sensory signal yt and its predicted value, g(μt). The precision-weighting is critical because it renders the correction or update sensitive to the properties of both the sensory channel and the prior belief: the belief update is more pronounced the higher the estimated precision of the sensory input and the lower the precision of the prior.
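The sequential, precision-weighted belief update described here can be sketched as follows. This is a minimal illustration under explicit assumptions: the sensory mapping g is taken to be the identity, noise is Gaussian with known precision, and posterior precision is the sum of prior and data precision (the exact result for this linear Gaussian case). Function names and numerical values are hypothetical.

```python
import random


def update_belief(mu, pi, y, pi_data):
    """One Bayesian update of a Gaussian belief (mean mu, precision pi)
    given a sample y observed with precision pi_data (identity mapping g)."""
    pi_post = pi + pi_data
    prediction_error = y - mu
    mu_post = mu + (pi_data / pi_post) * prediction_error
    return mu_post, pi_post


def infer_state(samples, mu0, pi0, pi_data):
    """Today's posterior is tomorrow's prior: fold the update over samples."""
    mu, pi = mu0, pi0
    for y in samples:
        mu, pi = update_belief(mu, pi, y, pi_data)
    return mu, pi


rng = random.Random(0)                 # fixed seed for reproducibility
true_x, noise_sd = 2.0, 0.5            # constant bodily state, sensor noise
pi_data = 1.0 / noise_sd ** 2          # precision is inverse variance
samples = [true_x + rng.gauss(0.0, noise_sd) for _ in range(200)]

mu, pi = infer_state(samples, mu0=0.0, pi0=1.0, pi_data=pi_data)
print(mu, pi)
```

As samples accumulate, the belief's precision grows and each new prediction error moves the mean less, which is the behavior the precision ratio is meant to capture.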

We can now use the same type of precision-weighted prediction error for influencing x, instead of inferring or sensing it. In other words, we turn the perceptual update rule of Equation 7 into a control rule, based on two simple considerations. First, to fix the setpoint for the homeostatic reflex, we clamp the prior belief: ∀t:μt = μprior, πt = πprior. This effectively corresponds to placing delta function (hyper)priors on the sufficient statistics of the prior belief (see Equation 8). Equation (7) shows that this can be achieved by simply ignoring the sensory information (more formally: setting the data precision to zero). Second, we define an action or effector function whose driving force is the prediction error under expected homeostasis; in other words, the difference between the actual sensory input y and the sensory input that would be expected at the homeostatic setpoint (μprior). This prediction error can be derived from the log evidence of a model mH which expects bodily state to be in homeostasis [and therefore the viscerosensory input to equal g(μprior)]. This is the case when the sufficient statistics of the marginal likelihood are given by the homeostatic setpoint μprior and homeostatic range $\pi_{prior}^{-1}$ (where c absorbs constant terms):

$$\begin{aligned} L &= \ln p(y \mid m_H) \\ &= \ln \mathcal{N}\left(y;\ g(\mu_{prior}),\ \pi_{prior}^{-1}\right) \\ &= -\frac{\pi_{prior}}{2}\left(y - g(\mu_{prior})\right)^2 + c \end{aligned} \tag{8}$$

Notably, this is the negative (Shannon) surprise S of seeing the data under the expectation of homeostasis (compare Equation 2):

$$L = \ln p(y \mid m_H) = -S(y \mid m_H) \tag{9}$$

According to Equation (8), minimizing the precision-weighted squared prediction error thus minimizes the interoceptive surprise S about the sensory inputs. This requires actions that make x maximally congruent with the homeostatic setpoint and hence maximize log evidence L. This can be achieved by defining action⁴ as the gradient of the log likelihood with regard to x (under application of the chain rule and noting, from Equation (6), that $\partial y / \partial x = g'(x)$):

$$a = \frac{\partial L}{\partial x} = \frac{\partial L}{\partial y}\,\frac{\partial y}{\partial x} = \pi_{prior}\left(g(\mu_{prior}) - y\right) g'(x) \tag{10}$$

and using it to smoothly adjust the value of the physiological variable x:

$$x_{t+1} = x_t + \lambda\, f(a_t) \tag{11}$$

Put differently, the chosen action a induces a gradient descent of x on interoceptive surprise:

$$x_{t+1} - x_t \propto \frac{\partial L}{\partial x} = -\frac{\partial S}{\partial x} \tag{12}$$

Here, λ is a time constant matched to the time scale at which action can affect x (for example, a slow time constant for hormonal regulation by the hypothalamus, or a very fast time constant for cardiovascular regulation via the baroreceptor reflex). Furthermore, for generality, Equation (11) includes a mapping f from action to changes in x. This could be non-linear and probabilistic to account for noise in motor processes (compare the analogous sensory mapping g in Equation 6). The advantage of a probabilistic formulation is that it allows for considering “action precision,” i.e., the confidence with which an action would have the desired effect on the physiological variable; this will be examined in future work. In the present simulation shown in Figure 6, we have kept f maximally simple (a deterministic identity function).

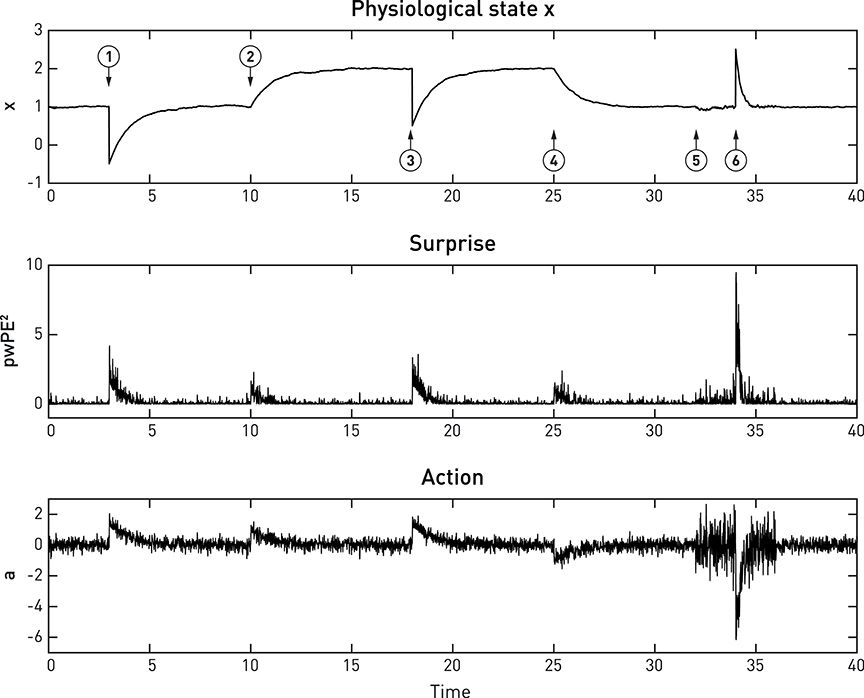

Equations (8)–(12) specify how the effector emits actions that move x toward its setpoint and minimize precision-weighted prediction error and thus interoceptive surprise (see middle and lower panels in Figure 6). This makes the action signal progressively diminish toward zero as x asymptotes its setpoint. Notably, the vigor or speed of action depends on both the current prediction error (discrepancy between the sensory feedback signal and its desired/predicted level), and the precision of the prior homeostatic belief. This means that for vitally important physiological variables whose homeostatic ranges are very tight, corrective actions are necessarily rapid. Conversely, when physiological variables diverge from setpoints, the experience of dyshomeostasis (i.e., the magnitude of prediction error) is much more pronounced when prior homeostatic beliefs are tight. Both properties are illustrated by the simple simulation shown in Figure 6.
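The dependence of corrective vigor on prior precision can be illustrated with a small noise-free simulation. This sketch is not the authors' simulation code: it assumes identity mappings for both the sensory function g and the action function f, treats λ as a multiplicative step size (`lam`), and uses hypothetical values for the perturbation and tolerance.

```python
def reflex_arc(x0, mu_prior, pi_prior, lam=0.1, n_steps=100):
    """Homeostatic reflex: action is the precision-weighted prediction
    error relative to a clamped setpoint, and nudges x toward it."""
    x = x0
    trajectory = [x]
    for _ in range(n_steps):
        y = x                                # noise-free identity sensor
        action = pi_prior * (mu_prior - y)   # precision-weighted prediction error
        x += lam * action                    # identity effector mapping
        trajectory.append(x)
    return trajectory


def steps_to_recover(trajectory, mu_prior, tol=0.05):
    """First time step at which x is within tol of the setpoint."""
    for t, x in enumerate(trajectory):
        if abs(x - mu_prior) < tol:
            return t
    return None


# Same perturbation (x displaced from 1.0 to 2.5), two prior precisions.
wide_prior = reflex_arc(x0=2.5, mu_prior=1.0, pi_prior=1.0)
tight_prior = reflex_arc(x0=2.5, mu_prior=1.0, pi_prior=4.0)
print(steps_to_recover(wide_prior, 1.0), steps_to_recover(tight_prior, 1.0))
```

With the tighter prior, the initial action is four times larger for the same deviation and x returns to its setpoint in fewer steps, while the action signal shrinks toward zero in both cases as the prediction error vanishes.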

Figure 6. A simulated example of allostatic regulation of homeostatic control, based on Equations (8)–(12). The upper panel shows the temporal evolution of a fictitious physiological state x (Equation 11) which is affected by environmental perturbations (➊,➌,➏; all with a magnitude of 1.5). The middle and lower panels display an approximation to interoceptive surprise—i.e., squared precision-weighted prediction error (pwPE2; compare last line of Equation 8)—and the associated action signal (Equation 10), respectively. Following the timeline from left to right, the homeostatic setpoint or belief is initially specified with a prior mean and prior precision of 1 each. Please note that even before the first perturbation (➊) occurs, sensory noise (zero mean, 0.25 standard deviation) leads to ongoing actions of minute amplitude which lead to (very small) deviations of x from the setpoint. Following a first perturbation (➊), the homeostatic reflex arc emits corrective actions that are proportional to precision-weighted viscerosensory prediction error (middle panel). As the actions are successful, x returns to setpoint and viscerosensory prediction error decays. ➋ indicates the beginning of allostatic control: here, the prediction of imminent future perturbations (by some generative model not specified here) leads to an anticipatory rise in the homeostatic setpoint (a shift in the prior mean to 2). As a consequence, in the absence of any change in sensory input, actions are elicited to change the value of x to the new setpoint. This ensures that the following perturbation ➌ does not bring x anywhere near the critical threshold. At ➍, a safe period is predicted, and allostatic control resets the homeostatic setpoint (prior mean) to 1. At ➎, another perturbation in the near future is being predicted, however, this time the direction of the perturbation is uncertain. 
Therefore, changing the mean or setpoint is not a viable option and allostatic control takes a different form: instead of changing prior mean, the prior precision of the homeostatic belief is increased from 1 to 4. As a consequence, when a perturbation occurs at ➏, this yields a considerably larger precision-weighted prediction error and hence greater interoceptive surprise (see lower panel), leading to a significantly more rapid corrective action (compare the slope of signal rise between ➊ and ➏), putting the agent at less risk, should another perturbation occur shortly after ➏. It is also noteworthy that the increased prior precision enhances the effect of sensory noise (compare the roughness of the three signals just prior to ➊ and ➏, respectively).

The above equations illustrate a key principle of active inference: the choice between reducing prediction error through changing predictions (updates of the generative model) or through action depends on precision. For example, reducing the precision of sensory input (πdata in Equation 7) disables belief updates while action (Equation 10) remains unaffected. Similarly, increasing the precision of predictions or prior beliefs (πt in Equation 7 or πprior in Equation 8) abolishes belief updating while action is increased in proportion to the increase in precision. In other words, a modulation of precision of top-down predictions is sufficient to switch from learning to acting.

This section has outlined a Bayesian account of homeostatic control. Equations (8)–(12) illustrate the role of prior beliefs for implementing setpoints in homeostatic reflex arcs where actions minimize prediction errors (and hence interoceptive surprise) in order to fulfill a prior belief that physiological state x should be within a particular range. This represents a simple but concrete implementation of active inference in the context of homeostatic control. Perhaps most importantly, dyshomeostasis can now be defined formally as a persistent deviation from precise prior expectations about bodily state that is indexed by chronically elevated surprise about viscerosensory inputs; or equivalently, as high entropy (average surprise) of viscerosensory channels.

One might note that entropy minimization by homeostatic control might constitute a violation of the second law of thermodynamics (that all systems monotonically increase their entropy over time). However, the second law of thermodynamics only applies to closed systems; by contrast, biological organisms represent open systems which exchange energy and information with their environment and are capable of decreasing entropy—at least temporarily (Von Bertalanffy, 1969). This is the very nature of homeostatic regulation: to maintain the body in a highly particular (low entropy and hence unlikely) condition.

A critical extension of the above scheme for homeostatic control is to allow higher-order goals or predictions to alter the homeostatic belief p(x). This amounts to allostasis: the proactive deployment of behavior, guided by predictions from a model, in order to avoid dyshomeostatic future states (Sterling, 2012; Figure 4B). For example, prolonged exposure to intense sunlight will not only cause immediate (e.g., increase in body temperature) but also delayed (e.g., dehydration) perturbations of homeostasis. Provided the brain is equipped with a generative model for predicting the evolution of environmental and bodily states, based on previous experience, it can take proactive actions and avoid dyshomeostatic states before they arise. Importantly, these homeostatic goals often have a hierarchical structure, where temporary deviations from homeostatic setpoints are tolerated or even induced in order to ensure that higher-order homeostatic goals can be reached in the future. For example, under a model that predicts a possible encounter with a hostile agent in a specific context, anticipatory deviations from hormonal and cardiovascular setpoints are induced to prepare for future fight-flight behavior.

From the perspective of theories like PCT or active inference, an efficient way of accomplishing hierarchical control is to temporarily alter the setpoint or prior belief of the relevant homeostatic reflex arc (for example, changing the belief about desirable plasma osmolality elicits drinking behavior before dehydration reaches a critical level). This relies on higher brain structures with three properties: (i) access to estimates of bodily state (interoception), (ii) the capacity to generate predictions over longer time scales, and (iii) anatomical connections that can modulate the homeostatic beliefs which reflex arcs in regions like the hypothalamus or brain stem serve to fulfill. Neuroanatomically, regions that are in a position to modulate homeostatic reflex arcs through allostatic predictions are likely situated at the top of the interoceptive hierarchy and include the AIC, ACC and subgenual cortex; this is discussed in more detail below.

The hierarchical (top-down) modulation of reflex arcs by predictions means that (homeostatic) beliefs about desirable bodily states in the present become dependent on (allostatic) beliefs about bodily states in the future. This essentially turns homeostatic beliefs into time-varying quantities under the influence of higher allostatic predictions ϕi(t). This belief transformation could affect either the mean and/or the precision of the homeostatic belief across time:
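As a sketch of what such a time-varying belief could look like, one might assume that the homeostatic belief stays Gaussian while its mean and precision become functions of allostatic predictions. The Gaussian form and the separate indices i and j (one prediction acting on the mean, another on the precision) are assumptions made for illustration.

```latex
p\big(x \mid \phi(t)\big)
  = \mathcal{N}\!\Big(x;\ \mu_{prior}\big(\phi_{i}(t)\big),\
      \pi_{prior}\big(\phi_{j}(t)\big)^{-1}\Big)
```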

Figure 6 shows a very simple simulation which illustrates both types of allostatic control. Here, a physiological variable x is driven away from its setpoint (the agent's homeostatic belief) three times, due to environmental perturbations. Each time a “Bayesian reflex” restores homeostasis according to Equations (8)–(12). Critically, following the first incident (➊ in Figure 6), higher levels of the system (not modeled here) predict further perturbations in a particular direction, and allostatic control is exerted by shifting the mean (setpoint) in the opposite direction while leaving the precision of the homeostatic belief unaffected (➋). As a consequence, x rises to the new expected level. Note that this occurs without specifying the action; instead, the action follows automatically once a new belief or setpoint has been adopted. At ➎, perturbations are predicted, but with uncertainty about their direction; hence shifting the setpoint or mean is not a viable option. Instead, the precision of the homeostatic belief is increased, leading to a smaller range of tolerated deviations in either direction. The subsequent perturbation (➏) elicits a far swifter restorative response than the first perturbation (➊).
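The qualitative behavior described for Figure 6 can be reproduced with a toy simulation. This is a minimal sketch and not the authors' simulation code: it assumes that the state changes in proportion to the precision-weighted prediction error, and the step indices, `gain`, `dt`, and the helper names `simulate_reflex` and `recovery_steps` are all hypothetical choices.

```python
import numpy as np


def simulate_reflex(mu, pi, kicks, dt=0.01, gain=1.0, noise_sd=0.0, seed=0):
    """Simulate a state x under a precision-weighted reflex.

    mu, pi : arrays with the prior mean (setpoint) and prior precision per step
    kicks  : dict {step index: displacement added to x at that step}
    """
    rng = np.random.default_rng(seed)
    n = len(mu)
    x = np.empty(n)
    x[0] = mu[0]
    for t in range(n - 1):
        sensed = x[t] + noise_sd * rng.standard_normal()  # noisy sensory input
        pe = mu[t] - sensed                               # prediction error
        # Assumed action: change x in proportion to the precision-weighted error.
        x[t + 1] = x[t] + dt * gain * pi[t] * pe + kicks.get(t + 1, 0.0)
    return x


def recovery_steps(x, start, target, tol=0.1):
    """Number of steps after `start` until x is within `tol` of `target`."""
    for k in range(start, len(x)):
        if abs(x[k] - target) < tol:
            return k - start
    return None


n = 3000
mu = np.zeros(n)
pi = np.ones(n)
mu[1000:2000] = 0.5   # allostatic shift of the setpoint (mean), precision unchanged
pi[2000:] = 4.0       # allostatic increase of precision from 1 to 4, mean back at 0
kicks = {200: -1.0, 1500: -1.0, 2500: -1.0}  # three perturbations of equal size

x = simulate_reflex(mu, pi, kicks)
slow = recovery_steps(x, 200, 0.0)    # recovery under precision 1
fast = recovery_steps(x, 2500, 0.0)   # recovery under precision 4

if __name__ == "__main__":
    print("steps to recover, precision 1:", slow)
    print("steps to recover, precision 4:", fast)
```

With these settings, the shifted setpoint pulls x upward without any explicit action command, and the same perturbation is corrected in fewer steps once the precision has been raised. Setting `noise_sd` above zero adds sensory noise to the input that drives the reflex.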

Here, we only provide a general frame for implementing allostasis from an active inference perspective; the specific form for the modulation of homeostatic beliefs by allostatic predictions is likely to vary across physiological variables, as these are controlled on different time scales and may draw on predictions from different generative models. Generally, however, we note that the frame suggested by Equation (13) is consistent with the PCT notion of control where hierarchically higher levels set the reference points for lower levels (Powers, 1973). It is also similar in structure to active inference accounts of motor control where primary motor cortex is assumed to modulate spinal reflex arcs through descending connections to α and γ motor neurons, “programming” motor trajectories via predictions about future proprioceptive input (Adams et al., 2013b).

In summary, under the hierarchical Bayesian view presented above, homeostatic and allostatic control, respectively, can be understood as active inference about bodily states on different time scales: actions (of a motor, autonomic, endocrine, or immunological sort) are selected which fulfill beliefs about current and future bodily states and reduce the average surprise (entropy) of viscerosensory channels over time (the time scale of the respective allostatic goal). Notably, this entropy-reducing principle may not only be in operation during the lifetime of an organism, but has also been suggested as the driving force behind the evolution of homeostatic mechanisms (Woods and Wilson, 2013).

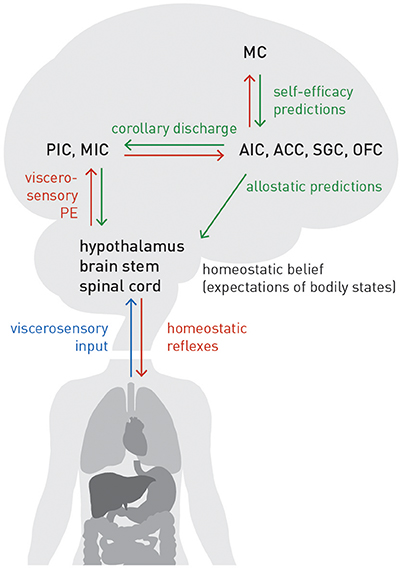

The extension from homeostatic to allostatic control highlights that interoception and homeostatic regulation are inevitably linked and form a closed loop: tuning the setpoints of homeostatic reflex arcs depends on accurate allostatic predictions about future bodily states; these predictions, in turn, depend on accurate inference about current bodily states. Figure 7 summarizes the neuroanatomy of a proposed circuit for integrating the afferent (interoceptive) and efferent (control) branches of homeostatic/allostatic regulation. This anatomical layout is not dissimilar to a previous proposal by Feldman-Barrett and Simmons (2015) but introduces several novel aspects (e.g., a metacognitive layer) and distinguishes interoception, allostatic predictions and homeostatic reflex arcs more explicitly.

Figure 7. A proposed circuit for interoception and allostatic regulation of homeostatic reflex arcs, together with a metacognitive layer (MC). See main text for details. Blue lines: sensory inputs; red lines: prediction errors; green lines: predictions.

In our proposal, AIC, ACC, subgenual cortex (SGC), and orbitofrontal cortex (OFC), which we collectively refer to as “visceromotor areas” (VMAs), are situated at the top of this circuit, embodying a generative model of (potentially different types of) viscerosensory inputs that enables a biological agent to infer current bodily states and predict future states, as a basis for allostatic predictions. This assumption is supported by known anatomical connections and their hierarchical relations based on laminar patterns of origin and target: tract tracing studies in the macaque monkey (Mesulam and Mufson, 1982; Mufson and Mesulam, 1982; Vogt and Pandya, 1987; Carmichael and Price, 1995) demonstrated that VMAs receive ascending projections from viscerosensory cortex (posterior and mid-insula). As in circuits supporting exteroception, these ascending connections are thought to signal prediction errors (Seth et al., 2011; Gu et al., 2013; Seth, 2013; Feldman-Barrett and Simmons, 2015). On the other hand, according to tract tracing studies in monkeys and rats (Mesulam and Mufson, 1982; Hurley et al., 1991; Carmichael and Price, 1995; Freedman et al., 2000; Chiba et al., 2001; Vogt, 2005; Hsu and Price, 2007), the visceromotor areas possess connections targeting hypothalamus, brain stem nuclei and spinal cord (partially relayed by amygdala, periaqueductal gray (PAG), and basal ganglia). These connections are thought to convey allostatic predictions which modulate the setpoints of homeostatic reflexes, as described above. Importantly, descending projections from visceromotor areas could send the same prediction to posterior and mid-insula; this effectively serves as efference copy or corollary discharge against which viscerosensory inputs can be compared. The resulting prediction errors are returned via ascending connections to visceromotor areas, allowing for (presumably slow) adjustment of allostatic predictions.

Several sources of uncertainty need to be highlighted here. First, the specific roles and division of labor amongst VMAs are largely unclear; for the moment, we have grouped them together without any differentiation. Second, non-trivial species differences in the neuroanatomy of interoceptive circuitry exist. For example, SGC targets different autonomic effector regions in rodents and monkeys (Hurley et al., 1991; Freedman et al., 2000), and it has been questioned whether AIC and ACC in monkeys and humans are functionally equivalent (Critchley and Harrison, 2013). Third, the present circuit model ignores the fact that AIC, ACC, and OFC each consist of several anatomically distinct subfields. For example, even within agranular insular cortex of the macaque monkey, subareas exhibit differential connectivity and may possess a hierarchical relation amongst themselves (Carmichael and Price, 1996). Finally, our present model assumes that effector regions (hypothalamus, brain stem, spinal cord), which receive allostatic predictions from VMAs, do not return prediction errors via ascending connections. This serves to ensure that allostatic predictions are fulfilled by actions, instead of these predictions being revised by prediction errors. This fits well with the agranular cytoarchitectonic nature of VMAs, i.e., the absence of a well-formed layer IV, which represents a key target lamina for ascending connections conveying prediction errors in granular cortex (cf. Feldman-Barrett and Simmons, 2015). Alternatively, as suggested by analogous active inference schemes for motor control, this type of prediction error signal could be temporarily “switched off” or attenuated during action execution by reducing precision (Adams et al., 2012, 2013a). It is questionable, however, whether this proposed mechanism could also apply to allostatic control, given the continuous presence of interoactions and the much longer time scales on which they unfold (e.g., hormonal or immunological regulation).

Our Bayesian account of homeostatic control (see Equations 8–12 above) led to a definition of dyshomeostasis as a state of elevated interoceptive surprise, that is, a deviation from precise prior expectations about bodily state that is indexed by increased precision-weighted prediction errors about viscerosensory inputs. The closed perception-action loop of homeostatic inference and control shown in Figure 7 indicates that, in addition to peripheral causes residing in the body itself, structural lesions (e.g., due to demyelinating processes in MS) or functional impairments (e.g., due to inflammation in depression) in either afferent or efferent branches of this circuit could lead to chronic dyshomeostasis. We now turn to some implications of this view for specific domains of cognition and emotion: fatigue and depression.

Interoceptive surprise plausibly has general and major consequences for cognition and emotion—even in interoceptive domains that may be operating outside immediate awareness (e.g., levels of certain hormones, cytokines, or metabolites). As noted above, surprise is equivalent to negative log model evidence, and persistently high surprise is the hallmark of a bad model. A chronic state of perceived dyshomeostasis indicates that the brain's generative model of viscerosensory inputs has low evidence—either because it generates bad predictions or because it cannot transform them (with sufficient confidence) into homeostasis-restoring actions. In other words, persistently high interoceptive surprise represents a fundamental warning sign that the brain presently cannot adequately control perturbations of potential relevance for survival. This leads us to a key hypothesis of this paper—that “enduring dyshomoeostasis induces high-order beliefs about lack of control and low self-efficacy” (Stephan et al., 2016a).

Self-efficacy is a concept of self-evaluation and behavioral change which holds that humans not only have expectations with regard to the outcome of chosen actions, but also self-oriented expectations concerning whether they can successfully execute these actions (Bandura, 1977). Self-efficacy can be defined as an individual's expectation of personal mastery and control: an individual with high self-efficacy believes that he/she can successfully perform the cognitive and motor operations required to overcome negative situations (e.g., obstacles, adversaries, threats, and aversive experiences). The construct of self-efficacy is thus closely related to concepts of metacognition (for review, see Clark and Dumas, 2015). Theoretical and empirical work suggests that low levels of perceived self-efficacy prevent the deployment of adequate coping behavior and may constitute an important component in the pathogenesis of depression and anxiety (Rosenbaum and Hadari, 1985; Bandura et al., 1996, 1999; Arnstein et al., 1999).